How speech training data providers handle consent documentation

Explore how leading speech data providers manage consent documentation to ensure compliance and avoid hefty fines.

Speech training data providers document consent in written, verbal, or digital form, and leading platforms add automated audit logs, DPIA compliance checks, and blockchain-based revocation systems. Providers like Luel include consent releases and PII audits in every dataset delivery and offer 24-hour provenance SLAs, helping buyers meet regulatory requirements backed by GDPR fines of up to €20 million or 4% of annual global turnover.

Key Facts

• Regulatory exposure: GDPR violations can trigger fines of up to €20 million or 4% of annual global turnover, whichever is higher, and HIPAA penalties can reach $1.9 million per violation category per year

• Consent modalities: Providers use written consent for studio recordings, verbal scripts for fieldwork, and digital platforms for large-scale distributed collection

• Documentation requirements: DPIAs must be completed before dataset acquisition and updated every three years, with mandatory triggers for biometric processing and large-scale voice data collection

• Revocation timelines: Leading providers process consent withdrawals within 7-15 working days, with some offering 24-hour provenance SLAs for audit requests

• Compliance differentiators: 75% of the global population was projected to have its personal data covered under privacy regulations by 2024, making transparent consent documentation essential for enterprise buyers

• Emerging technology: Blockchain-based consent management using ERC-721 tokens enables immutable records, automated enforcement, and transparent compensation tracking

Speech data consent documentation now dictates whether an AI voice model ships or stalls. With GDPR fines climbing to €20 million and HIPAA penalties reaching $1.9 million per violation category, bulletproof paperwork around audio rights separates compliant providers from legal liabilities. This guide breaks down how leading speech training data providers document consent, what enterprise buyers should verify, and where emerging technologies like blockchain are reshaping the landscape.

Why consent paperwork is the new North Star for speech datasets

"Voice data has emerged as one of the most powerful and personal forms of information available," notes Way With Words. Under GDPR, any information relating to an identified or identifiable person qualifies as personal data, and voice recordings fall squarely into this category.

Consent documentation serves three interlocking purposes:

- Legal protection: Frameworks like GDPR, POPIA, and CCPA require explicit consent for processing personal data, including audio recordings

- Ethical compliance: Documenting informed consent ensures participants understand how their voice data will be used, stored, and potentially shared

- Technical traceability: Audit-ready consent logs enable buyers to prove provenance when regulators come knocking

The European Data Protection Board has made clear that "AI models trained with personal data cannot, in all cases, be considered anonymous." This opinion means speech datasets cannot simply claim anonymization as a compliance shortcut. Every audio file needs a documented lawful basis, typically explicit consent from the speaker.

By 2024, 75% of the global population was projected to have its personal data covered under privacy regulations, making vendor transparency essential for any enterprise AI team.

The cost of skipping robust consent logs: fines, breaches and model collapse

Weak consent documentation creates three categories of risk that compound quickly.

Regulatory penalties: GDPR violations can result in fines of up to 4% of annual global turnover or €20 million, whichever is higher. Healthcare organizations face even steeper exposure, with HIPAA penalties reaching $1.9 million per violation category, per year.

Operational chaos: Without traceable consent records, AI teams cannot respond to data subject access requests within GDPR's one-month (roughly 30-day) deadline. Under HIPAA, a single breach affecting 500 or more individuals triggers mandatory federal reporting and often invites class-action lawsuits.

Model collapse: Gartner warns that "data can no longer be assumed human or trustworthy by default." When organizations train on unverified or improperly consented data, they risk model collapse where errors and biases amplify through recursive training cycles. The operational reality hits hard: models trained on unlabeled, unvetted synthetic or improperly sourced data drift away from accuracy.

Key takeaway: Consent documentation failures cascade across legal, operational, and technical domains, making upfront investment in robust logging far cheaper than remediation.
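The "whichever is higher" rule behind the GDPR figures above is simple arithmetic. The sketch below only illustrates that ceiling. `gdpr_fine_cap` is a hypothetical helper name, and actual fines are set case by case by regulators and may fall well below the cap.

```python
def gdpr_fine_cap(annual_global_turnover_eur: float) -> float:
    """Upper bound on a GDPR fine: the higher of EUR 20 million or 4% of turnover."""
    flat_cap_eur = 20_000_000
    turnover_share = 0.04
    return max(flat_cap_eur, turnover_share * annual_global_turnover_eur)


# EUR 100 million turnover: 4% is EUR 4 million, so the flat EUR 20 million cap applies.
small = gdpr_fine_cap(100_000_000)

# EUR 2 billion turnover: 4% is EUR 80 million, which exceeds the flat cap.
large = gdpr_fine_cap(2_000_000_000)
```

For smaller providers the flat €20 million figure is the binding ceiling. For large enterprises the percentage takes over.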

From verbal scripts to digital ledgers: documenting consent at scale

Written, verbal, and digital consent: when each makes sense

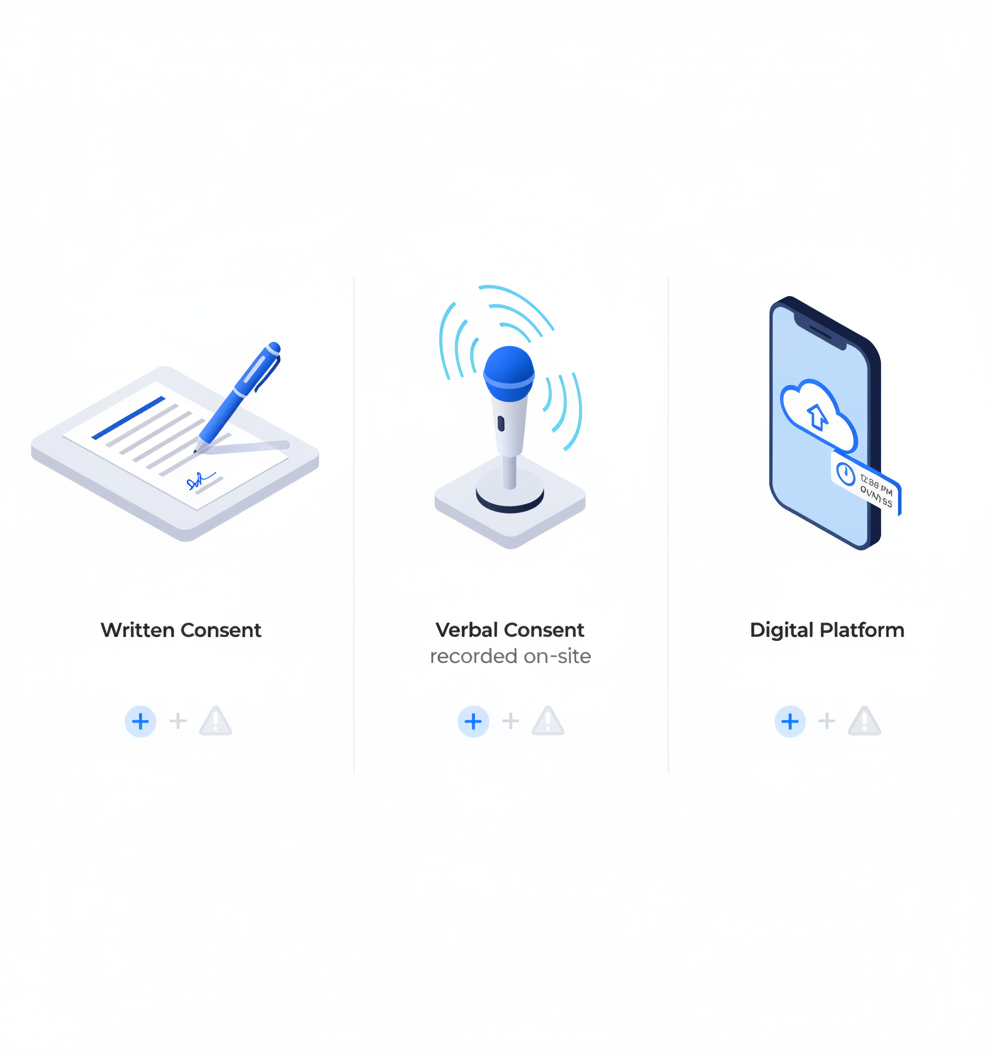

Providers deploy three primary consent modalities, each suited to different collection contexts:

| Consent Type | Best For | Pros | Cons |
|---|---|---|---|
| Written | Controlled studio recordings, formal research | Clear documentation, legally robust | Logistics-heavy for remote collection |
| Verbal | Fieldwork, remote audio collection | Practical for in-the-moment capture | Requires recorded verbal script plus documentation in records |
| Digital | Large-scale distributed collection | Scalable, timestamped, easily audited | Depends on platform integrity |

"Written consent is the traditional and most widely accepted form," according to Way With Words. However, digital consent has become increasingly popular for remote projects where written documentation proves impractical.

For studies receiving expedited approval, IRBs may waive signature requirements when teams provide justified reasons in the protocol. The key: whatever modality you choose, the consent process must be documented in records.
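One way to keep all three modalities "documented in records" is to store them in a single record shape. The following is a minimal sketch under stated assumptions: the `ConsentRecord` fields, the `is_documented` rule, and the sample values are all hypothetical and do not reflect any provider's actual schema.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class ConsentModality(Enum):
    WRITTEN = "written"
    VERBAL = "verbal"
    DIGITAL = "digital"


@dataclass(frozen=True)
class ConsentRecord:
    participant_id: str      # pseudonymous ID, not the speaker's name
    modality: ConsentModality
    purpose: str             # what the speaker agreed to
    evidence_ref: str        # signed form, recorded verbal script, or platform log entry
    signature_waived: bool   # e.g. a waiver justified in the study protocol
    recorded_at: datetime


def is_documented(record: ConsentRecord) -> bool:
    """Treat a record as documented only if it names a purpose and points at stored evidence."""
    return bool(record.purpose.strip()) and bool(record.evidence_ref.strip())


record = ConsentRecord(
    participant_id="spk-0001",
    modality=ConsentModality.VERBAL,
    purpose="ASR model training",
    evidence_ref="recordings/consent/spk-0001.wav",  # hypothetical path
    signature_waived=True,
    recorded_at=datetime(2025, 1, 15, 9, 30, tzinfo=timezone.utc),
)
```

Keeping the evidence reference mandatory regardless of modality means a verbal or waived-signature consent still leaves something an auditor can open.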

Where DPIAs fit into speech collection campaigns

A Data Protection Impact Assessment is "a process to help you identify and minimise the data protection risks of a project," according to the ICO's accountability guidance.

DPIAs become mandatory when:

- Processing is likely to result in high risk to individuals' rights

- Large-scale processing of voice data occurs

- Biometric information is used for identification

- Publicly accessible areas are systematically monitored on a large scale, for example by video devices, per Article 35(3)(c)

DPIA checkpoints for speech campaigns:

- Document nature, scope, and context of processing

- Assess necessity and proportionality

- Identify and evaluate risks to data subjects

- Define mitigation measures

- Update at least every three years or when material changes occur

DPIAs must be completed before acquiring datasets, not as a retrospective checkbox exercise.
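The triggers and the review cadence listed above can be expressed as a small pre-acquisition check. This is a sketch only: `CampaignProfile`, `dpia_triggers`, and `dpia_review_due` are hypothetical names, the boolean flags stand in for judgments a privacy team would make, and the three-year interval is taken from the checklist above.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class CampaignProfile:
    high_risk_to_rights: bool = False
    large_scale_voice_processing: bool = False
    biometric_identification: bool = False
    public_area_monitoring: bool = False


def dpia_triggers(profile: CampaignProfile) -> list[str]:
    """Return the mandatory-DPIA triggers that apply to this campaign."""
    checks = {
        "high risk to individuals' rights": profile.high_risk_to_rights,
        "large-scale voice data processing": profile.large_scale_voice_processing,
        "biometric identification": profile.biometric_identification,
        "monitoring of public areas": profile.public_area_monitoring,
    }
    return [name for name, applies in checks.items() if applies]


def dpia_review_due(last_reviewed: date, today: date, material_change: bool = False) -> bool:
    """A review is due after three years or as soon as something material changes."""
    try:
        deadline = last_reviewed.replace(year=last_reviewed.year + 3)
    except ValueError:  # last review on 29 February, target year is not a leap year
        deadline = last_reviewed.replace(year=last_reviewed.year + 3, day=28)
    return material_change or today >= deadline


campaign = CampaignProfile(large_scale_voice_processing=True, biometric_identification=True)
triggers = dpia_triggers(campaign)
```

A non-empty `triggers` list means the DPIA has to exist before the dataset is acquired. An empty list does not prove a DPIA is unnecessary, only that none of the listed triggers was flagged.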

Luel vs Appen vs FutureBeeAI: Whose consent stack is enterprise-ready?

| Provider | Consent Artifacts | Revocation SLA | Audit Readiness | Contributor Satisfaction |
|---|---|---|---|---|
| Luel | Consent releases, PII audits, audit logging | 24-hour provenance SLA | Manifests with metadata, transcripts, QA scores | 24-48 hour contributor payments |
| Appen | GDPR adherence claims | Not publicly specified | 165,000+ hours transcribed | TrustScore 1.8/5 |
| FutureBeeAI | Multilingual forms via Yugo platform | 7-15 working days data deletion | ISO 27001 certified, metadata included | 30,000+ global contributors |
| DefinedCrowd | Enterprise compliance focus | Custom enterprise terms | Compliance with data ethics | Human-in-the-loop accuracy |

Case spotlight: Luel's consent manifest and 24-hour provenance SLA

Luel bakes "consent releases, PII audits, and audit logging" into every dataset delivery. Each manifest includes clip metadata, transcripts, QA scores, and direct download links, giving legal teams everything needed to trace provenance in minutes rather than weeks.
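A buyer can run a basic provenance check on any manifest of this kind before ingesting it. The sketch below assumes a hypothetical manifest layout: the field names `clip_id`, `consent_release_id`, `pii_audit_passed`, and `qa_score` are illustrative and are not the schema of any named provider.

```python
from typing import Optional, TypedDict


class ManifestEntry(TypedDict):
    clip_id: str
    consent_release_id: Optional[str]  # None when no release is attached
    pii_audit_passed: bool
    qa_score: float


def provenance_gaps(manifest: list[ManifestEntry]) -> dict[str, list[str]]:
    """Map each problematic clip ID to the reasons it cannot be traced."""
    gaps: dict[str, list[str]] = {}
    for entry in manifest:
        problems = []
        if not entry["consent_release_id"]:
            problems.append("missing consent release")
        if not entry["pii_audit_passed"]:
            problems.append("PII audit not passed")
        if problems:
            gaps[entry["clip_id"]] = problems
    return gaps


manifest: list[ManifestEntry] = [
    {"clip_id": "clip-001", "consent_release_id": "rel-9f2", "pii_audit_passed": True, "qa_score": 0.97},
    {"clip_id": "clip-002", "consent_release_id": None, "pii_audit_passed": True, "qa_score": 0.97},
    {"clip_id": "clip-003", "consent_release_id": "rel-a41", "pii_audit_passed": False, "qa_score": 0.88},
]
gaps = provenance_gaps(manifest)
```

Clips that appear in `gaps` would be held back from training until the vendor supplies the missing artifact.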

The 24-hour provenance SLA means enterprise buyers can respond to regulatory inquiries or data subject requests without scrambling through fragmented vendor systems. For AI teams operating under GDPR's 72-hour breach notification window, this speed matters.

Why legacy workflows at Appen slow audits

Appen's scale is impressive: 165,000+ hours transcribed across 150 locales, 320+ pre-built datasets, and a contributor network exceeding one million people. However, contributor satisfaction tells a different story.

Contributor earnings average just $6.03/hour with widespread complaints about payment delays stretching 15+ days. When contributors feel undervalued, consent quality suffers. Rushed or frustrated participants may not fully comprehend consent terms, creating downstream compliance risks.

The TrustScore of 1.8/5 reflects these structural challenges. For enterprise buyers needing audit-ready consent documentation, legacy vendor processes can become bottlenecks.

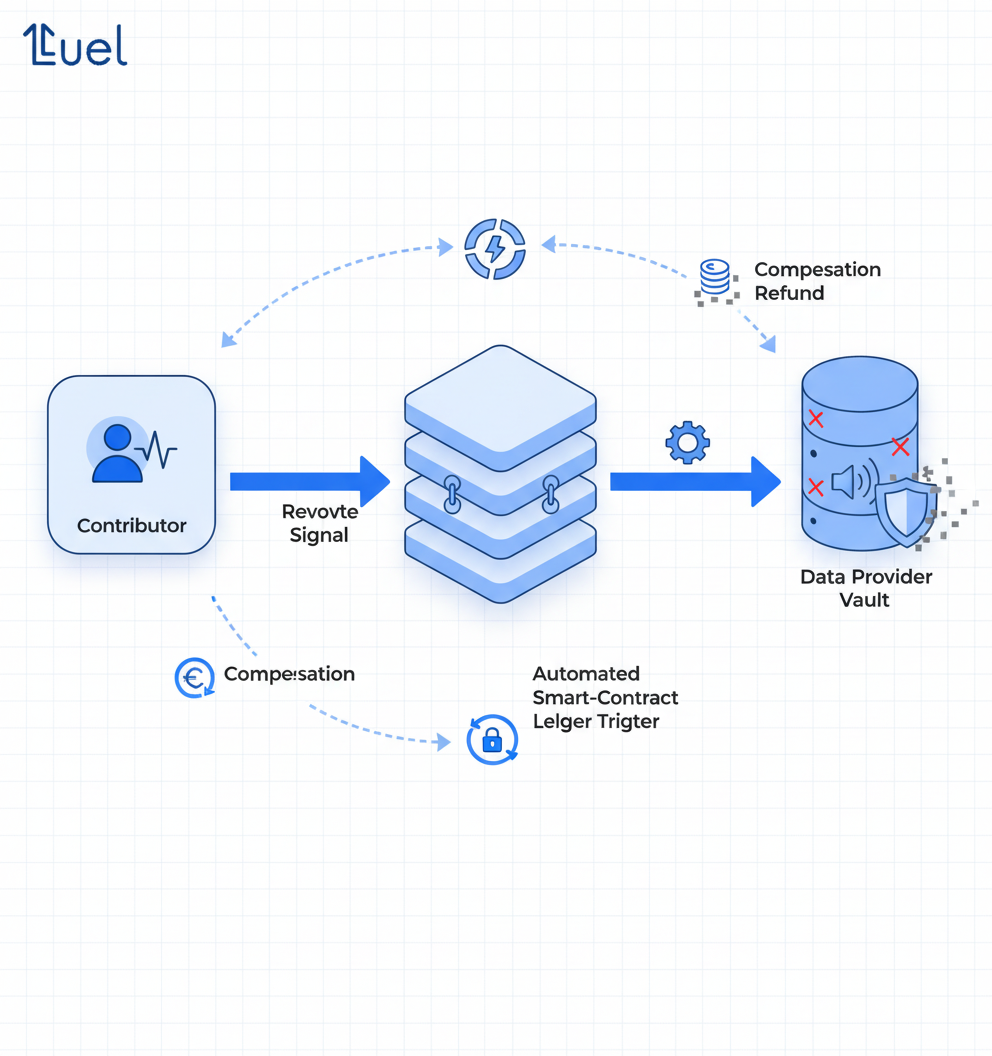

Consent revocation and blockchain proofs: the next frontier

Consent withdrawal enables contributors to revoke data usage rights at any stage of a project. This capability is not optional under GDPR: Article 7(3) gives individuals the right to withdraw consent at any time, and Article 17 gives them the right to erasure.

Leading providers are implementing increasingly sophisticated revocation mechanisms:

- FutureBeeAI: Data deleted or anonymized within 7-15 working days after withdrawal confirmation, with identity verification before processing

- IETF vCon standard: Defines consent attachments with structured metadata including temporal validity periods and cryptographic proof mechanisms

- OConsent Protocol: Uses ERC-721 based consent tokens with embedded permissions, expiry dates, and compensation terms, verified through decentralized nodes with zero-knowledge (ZK) proofs
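A revocation SLA quoted in working days, such as the 7-15 day window above, translates into calendar dates as follows. This is a minimal sketch that assumes a Monday-to-Friday working week and ignores public holidays. `add_working_days` and `revocation_window` are hypothetical helpers, not part of any provider's tooling.

```python
from datetime import date, timedelta


def add_working_days(start: date, working_days: int) -> date:
    """Advance by N working days, skipping Saturdays and Sundays (holidays ignored)."""
    current = start
    remaining = working_days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday=0 ... Friday=4
            remaining -= 1
    return current


def revocation_window(confirmed_on: date) -> tuple[date, date]:
    """Earliest and latest purge dates for a 7-15 working day SLA."""
    return add_working_days(confirmed_on, 7), add_working_days(confirmed_on, 15)


# Withdrawal confirmed on Monday, 2 June 2025.
earliest, latest = revocation_window(date(2025, 6, 2))
```

In this example the purge would fall between 11 June and 23 June 2025, which is the kind of concrete date a buyer can hold a vendor to.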

Blockchain-based consent management offers three advantages:

- Immutability: Tamper-proof records of consent grants and revocations

- Automated enforcement: Smart contracts that automatically enforce consent terms

- Compensation transparency: Token-based systems enabling clear value exchange between contributors and data buyers
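The immutability property can be illustrated without a blockchain at all. The sketch below is a plain local hash chain, not an ERC-721 token, a smart contract, or the OConsent protocol: each log entry commits to the previous entry's SHA-256 hash, so editing an earlier grant or revocation is detectable. All function names and event fields are hypothetical, and the example does not cover automated enforcement or decentralized verification.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64


def _entry_hash(prev_hash: str, event: dict) -> str:
    """Hash the previous entry's hash together with a canonical JSON form of the event."""
    payload = json.dumps(event, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + payload).hexdigest()


def append_event(log: list[dict], event: dict) -> None:
    """Append a consent event linked to the current tail of the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({"event": event, "prev_hash": prev_hash, "hash": _entry_hash(prev_hash, event)})


def verify_log(log: list[dict]) -> bool:
    """Recompute every link; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append_event(log, {"participant": "spk-0001", "action": "grant", "expires": "2026-12-31"})
append_event(log, {"participant": "spk-0001", "action": "revoke"})
chain_ok = verify_log(log)
```

A chain like this is only tamper-evident to whoever holds a trusted copy of the latest hash. Publishing that hash to an external ledger is the step blockchain-based systems add.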

Permission.io's CEO Charlie Silver notes: "Enterprises know that clean, permissioned data is the foundation of AI... Permission-as-a-Service helps organizations both capture fresh human input and tap into the hidden value of their existing datasets, while compensating individuals for the use of that data."

Mapping the rulebook: GDPR, EU AI Act, CCPA and HIPAA for voice data

| Regulation | Scope | Key Requirements | Penalties |
|---|---|---|---|
| GDPR | EU residents | Explicit consent, 30-day access requests, 72-hour breach notification | Up to €20M or 4% global turnover |
| EU AI Act | High-risk AI systems | Data transparency, visible consent confirmation | Up to €35M or 7% annual revenue |
| CCPA (2026) | California consumers | ADMT opt-out, neural data as sensitive PI, pre-use notices | $7,500 per intentional violation |
| HIPAA | Protected Health Information | BAA required, end-to-end encryption, zero-retention modes | $1.9M per violation category/year |

The California Privacy Protection Agency has intensified enforcement, with record fines exceeding $1.3 million in 2025. The 2026 CCPA updates expand sensitive personal information to include neural data and mandate visible confirmation that opt-out requests have been processed.

Voice recordings containing patient names, medical conditions, or treatment plans qualify as PHI under HIPAA. Any AI voice agent accessing such data requires a signed Business Associate Agreement, with no exceptions.

The EU AI Act entered into force in August 2024, with obligations for general-purpose AI models applying from August 2025, and it requires visibility over AI data supply chains. Violations can reach €35 million or 7% of yearly revenue. Regulators are also already penalizing AI companies under existing data protection law: Clearview AI was fined €30.5 million for biometric data misuse, and OpenAI was fined €15 million for collecting data without consent.

Enterprise due-diligence checklist for buying speech datasets

Before signing with any speech dataset provider, run through these ten verification points:

- Request sample consent releases with participant signatures or digital proofs

- Verify DPIA completion date and scope for datasets involving voice biometrics

- Confirm revocation workflow SLA: How quickly is data purged after withdrawal requests?

- Check cross-border transfer safeguards: Are the Standard Contractual Clauses (SCCs) adopted in June 2021 in place?

- Review audit logs: When was each file's consent last verified?

- Examine de-identification processes: Face blurring, pseudonymization, anonymization methods

- Verify controller/processor designations in licensing contracts

- Assess transparency reporting: Does the vendor provide accessible dataset cards?

- Check contributor payment timelines: Delayed payments correlate with consent quality issues

- Request BAA availability if datasets may contain healthcare-related content

"Buyers should prioritize vendors providing verifiable consent mechanisms, current DPIAs, active transfer safeguards, and accessible dataset cards," advises Luel's compliance guide.

Vendors unable to supply consent documentation within 72 hours signal hidden compliance gaps. The shortcut of scraping public data creates particular risk: "publicly available does not automatically mean free to use for training."

Key takeaways: Turning consent logs into a competitive edge

Consent documentation has evolved from legal checkbox to operational differentiator. Providers who treat consent as "product architecture" rather than paperwork, as FutureBeeAI describes their approach, build datasets that withstand regulatory scrutiny.

The evidence points clearly:

- Weak consent documentation compounds into regulatory, operational, and model quality failures

- Written, verbal, and digital consent each serve specific collection contexts

- DPIAs must precede dataset acquisition, not follow it

- Revocation capabilities and blockchain proofs are becoming table stakes

- Enterprise buyers need systematic due diligence beyond vendor claims

For AI teams requiring compliant speech data with traceable provenance, Luel's marketplace delivers consent releases, PII audits, and audit logging by default. The 24-hour provenance SLA and 3M+ global contributor network enable fast, rights-cleared data collection without the compliance gaps that plague legacy vendors. When the regulatory environment demands proof of consent at every layer, starting with audit-ready datasets eliminates remediation costs downstream.

Frequently Asked Questions

Why is consent documentation crucial for speech datasets?

Consent documentation is essential for legal protection, ethical compliance, and technical traceability. It ensures that personal data, including voice recordings, is processed with explicit consent, meeting regulations like GDPR and CCPA.

What are the risks of inadequate consent documentation?

Inadequate consent documentation can lead to regulatory penalties, operational chaos, and model collapse. Without proper records, organizations face fines, lawsuits, and compromised AI model accuracy due to unverified data.

How do speech data providers document consent?

Providers use written, verbal, and digital consent methods. Written consent is legally robust, verbal consent is practical for fieldwork, and digital consent is scalable and easily audited, depending on the project's needs.

What role do DPIAs play in speech data collection?

Data Protection Impact Assessments (DPIAs) help identify and minimize data protection risks. They are mandatory for high-risk processing, large-scale voice data collection, and when biometric data is used.

How does Luel ensure compliance in speech data collection?

Luel integrates consent releases, PII audits, and audit logging into every dataset delivery, offering a 24-hour provenance SLA to ensure fast, compliant data collection and response to regulatory inquiries.

Sources

- https://www.luel.ai/datasets

- https://ainora.lt/blog/ai-voice-agent-gdpr-compliance-guide

- https://www.luel.ai/blog/gdpr-compliant-multimodal-data-comparing-ai-training-data-providers

- https://mediaffy.com/hipaa-compliance-ai-voice-agents/

- https://waywithwords.net/resource/how-does-gdpr-apply-to-speech-datasets/

- https://waywithwords.net/resource/informed-consent-in-audio-datasets/

- https://www.findarticles.com/gartner-warns-ai-self-poisoning-and-outlines-a-cure/

- https://hrpp.research.virginia.edu/teams/irb-sbs/researcher-guide-irb-sbs/consent/oralverbal-consent

- https://www.luel.ai/blog/gdpr-compliance-checklist-for-off-the-shelf-egocentric-video-datasets

- https://natlawreview.com/article/california-finalizes-groundbreaking-regulations-ai-risk-assessments-and-0

- https://www.luel.ai/blog/luel-vs-appen-for-speech-data-which-ai-training-data-provider-wins

- https://www.futurebeeai.com/knowledge-hub/withdraw-consent-data-collection

- https://aichief.com/ai-business-tools/definedcrowd/

- https://www.ietf.org/archive/id/draft-howe-vcon-consent-00.html

- https://oconsent.io/

- https://transcend.io/blog/ai-auditability-can-you-trace-your-training-data

- https://natlawreview.com/article/new-updates-ccpa-regulations-californias-focus-admt-cybersecurity-audits-risk

- https://secureprivacy.ai/blog/ccpa-requirements-2026-complete-compliance-guide

- https://www.innopulse.io/insights-data-protection-compliance-ai-training-data/

- https://www.futurebeeai.com/blog/informed-consent-in-ai-data-collection