Luel vs Appen for speech data: Which AI training data provider wins?

Explore the strengths of Luel and Appen in providing AI training data, focusing on quality, compliance, and contributor satisfaction.

Luel offers faster contributor payment (24-48 hours versus reported delays of 15+ days), built-in compliance infrastructure, and higher contributor satisfaction than Appen, whose Trustpilot TrustScore sits at 1.8/5 amid complaints about payment delays and support gaps. While Appen maintains 1M+ contributors across 500+ languages, recent feedback suggests that those payment delays and support gaps have eroded contributor morale and, with it, data quality.

At a Glance

- Quality metrics matter: Poor data quality costs organizations an average of $12.9 million annually, and speech-specific issues such as short utterances (35 languages in Mozilla Common Voice 17.0 have a median utterance duration under 4 seconds) directly affect ASR performance

- Appen's scale vs satisfaction trade-off: Despite 165,000+ hours transcribed and 320+ pre-built datasets, contributor earnings average just $6.03/hour with widespread complaints about payment delays

- Luel's marketplace approach: Delivers rights-cleared, quality-audited data with 24-48 hour contributor payments and built-in GDPR/HIPAA compliance infrastructure

- Compliance is non-negotiable: Under GDPR, voice data qualifies as personal data and requires explicit consent, and healthcare data breaches cost an average of $10.93 million per incident

- Contributor experience drives quality: When platforms lose contributor engagement through payment delays or poor support, micro-level issues like inadequate quality control multiply in the resulting datasets

Choosing the right speech data provider directly affects model accuracy, regulatory compliance, and time-to-market. Poor data quality alone costs organizations an average of $12.9 million per year, and when training data fails to capture diverse accents, natural speech patterns, or proper consent documentation, the downstream ASR models inherit every flaw.

Two names dominate the conversation: Appen, the publicly traded Australian company with 25+ years in the industry, and Luel, a Y Combinator-backed marketplace founded in 2025 that promises rights-cleared, quality-audited multimodal data at enterprise speed. This comparison examines quality metrics, contributor sentiment, compliance infrastructure, and delivery workflows to help enterprise AI teams decide which provider fits their speech data needs in 2026.

Why does the speech data provider you pick decide model success?

The quality of training data shapes every downstream metric an ASR system produces. Micro-level issues such as short utterances, transcription errors, and inconsistent metadata stem from inadequate quality control during collection. Macro-level problems arise when dataset designers overlook the sociolinguistic context of the languages they capture.

Gartner defines data quality as "the usability and applicability of data used for an organization's priority use cases—including AI and machine learning initiatives." But traditional high-quality data standards do not automatically translate to AI-ready data. As Gartner notes, AI-ready data must be representative of every pattern, error, and outlier the model will encounter in production.

For speech specifically, that means capturing regional accents, ambient noise profiles, emotional variation, and domain-specific vocabulary. When datasets miss these elements, word error rates climb and models fail to generalize.

What quality metrics define reliable speech data?

ASR quality is typically measured by word error rate (WER), defined as "the Levenshtein distance between the target transcript and the machine-generated transcript" (ACL Anthology), computed over words and conventionally normalized by the number of words in the target transcript. State-of-the-art systems now achieve WER as low as 1.4% on benchmarks like LibriSpeech, yet optimizing for WER alone ignores latency, robustness across domains, and demographic fairness.
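To make the WER definition concrete, here is a minimal Python sketch that computes a word-level Levenshtein distance and divides it by the number of reference words. The function name and the whitespace tokenization are assumptions for illustration; production evaluations usually also normalize casing and punctuation before scoring.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference word count."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] = edit distance between the reference words seen so far
    # (one fewer than the current row) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # i deletions turn i reference words into an empty hypothesis
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word missing from hypothesis
                curr[j - 1] + 1,     # insertion: extra word in hypothesis
                prev[j - 1] + cost,  # substitution, or a match when cost is 0
            ))
        prev = curr
    return prev[-1] / len(ref)


# One dropped word out of six reference words.
example_wer = word_error_rate("the cat sat on the mat", "the cat sat on mat")
```

Because the distance is divided by the reference length, WER can exceed 1.0 when the hypothesis contains many inserted words.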

Beyond WER, reliable speech datasets require:

Utterance duration: Short prompts reduce the proportion of actual speech. In Mozilla Common Voice 17.0, 35 languages have median utterance duration under 4 seconds, limiting contextual learning.

Metadata richness: Timestamps, speaker labels, background noise tags, and emotional markers enable fine-grained model tuning.

Transcription accuracy: Amazon research demonstrates that confidence-based reprocessing and automatic word error correction can reduce transcription WER by over 50%, yielding a 10% relative WER improvement for trained ASR models.
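The utterance-duration check above is easy to run on any candidate dataset before purchase. The sketch below groups clips by language and flags languages whose median duration falls under a threshold. The clip fields (`language`, `duration_s`) are hypothetical names, not a schema published by Mozilla, Appen, or Luel, and the 4-second default simply mirrors the figure quoted above.

```python
from collections import defaultdict
from statistics import median


def flag_short_languages(clips, threshold_s=4.0):
    """Return {language: median_duration} for languages below the threshold.

    `clips` is an iterable of dicts with hypothetical keys
    "language" and "duration_s" (clip length in seconds).
    """
    durations = defaultdict(list)
    for clip in clips:
        durations[clip["language"]].append(clip["duration_s"])

    medians = {lang: median(values) for lang, values in durations.items()}
    return {lang: m for lang, m in medians.items() if m < threshold_s}


# Illustrative clips only; these are not real dataset statistics.
sample_clips = [
    {"language": "te", "duration_s": 3.0},
    {"language": "te", "duration_s": 3.5},
    {"language": "te", "duration_s": 5.0},
    {"language": "es", "duration_s": 4.5},
    {"language": "es", "duration_s": 6.0},
]
short_languages = flag_short_languages(sample_clips)
```

The median is used rather than the mean so that a handful of very long recordings cannot hide a dataset dominated by short prompts.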

Speaker and language diversity: the hidden WER driver

Lack of speaker diversity is a final micro-level issue that audits consistently flag. When datasets draw from a narrow pool of contributors, models struggle with unfamiliar accents, age groups, or speaking styles.

Sociolinguistic phenomena compound the problem. Digraphia—where a language uses multiple writing systems—and diglossia—where formal and everyday speech varieties differ—require explicit dataset planning. Without it, models trained on formal text may fail on conversational audio.
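One rough way to spot a narrow contributor pool is to measure how much of the audio comes from the most prolific speakers. The following sketch assumes each clip carries a hypothetical `speaker_id` and `duration_s` field; it is one possible diversity signal, not a metric defined by any of the sources cited here.

```python
from collections import Counter


def top_speaker_share(clips, k=1):
    """Fraction of total audio duration contributed by the k most prolific speakers."""
    seconds_by_speaker = Counter()
    for clip in clips:
        seconds_by_speaker[clip["speaker_id"]] += clip["duration_s"]

    total = sum(seconds_by_speaker.values())
    if total == 0:
        raise ValueError("dataset contains no audio")

    top = sum(seconds for _, seconds in seconds_by_speaker.most_common(k))
    return top / total


# Illustrative clips only: speaker A dominates this tiny sample.
sample_clips = [
    {"speaker_id": "A", "duration_s": 60.0},
    {"speaker_id": "A", "duration_s": 30.0},
    {"speaker_id": "B", "duration_s": 10.0},
]
share = top_speaker_share(sample_clips, k=1)
```

A share close to 1.0 for a small `k` suggests the model will mostly hear a few voices, whatever the headline contributor count says.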

Key takeaway: Reliable speech data demands more than low WER on controlled benchmarks; it requires diverse speakers, rich metadata, and sociolinguistic awareness baked into collection design.

Appen in 2026: has scale come at the cost of quality?

Appen touts impressive numbers: 165,000+ hours of audio transcribed across 150 locales at 99.5% accuracy, 320+ pre-built datasets covering 80+ languages, and a contributor network exceeding one million people in 500+ languages and dialects. The company has completed over 20,000 AI projects and processed 100 million LLM data elements.

Yet recent contributor and client feedback paints a different picture. Trustpilot reviews give Appen a TrustScore of 1.8 out of 5, with most reviewers unhappy overall. Common complaints include payment delays, support gaps, and project cancellations.

What do contributors and clients say about Appen?

On Trustpilot, one contributor wrote: "This company is clearly exploiting its innocent workers. They have big-name clients like Microsoft and AWS, but the pay rates they offer their workers are a ridiculous joke" (Trustpilot).

Another noted: "Since the introduction of CrowdGen in September, many things have been going wrong" (Trustpilot).

Glassdoor data shows a 3.7 out of 5 employee rating, with 71% recommending the company to a friend. However, some reviews are blunt: "Appen is a total scam and ripoff of your valuable time" (Glassdoor).

Contributor earnings average $6.03 per hour with a median of $4.00, and monthly earnings average $46.36. While 85.71% of contributors recommend the platform, many note confusing onboarding and inconsistent project availability.

Unmanaged crowdsourcing can produce short, low-diversity utterances that raise word error rates. When contributor morale drops, data quality often follows.

How does Luel's marketplace eliminate speech-data pain points?

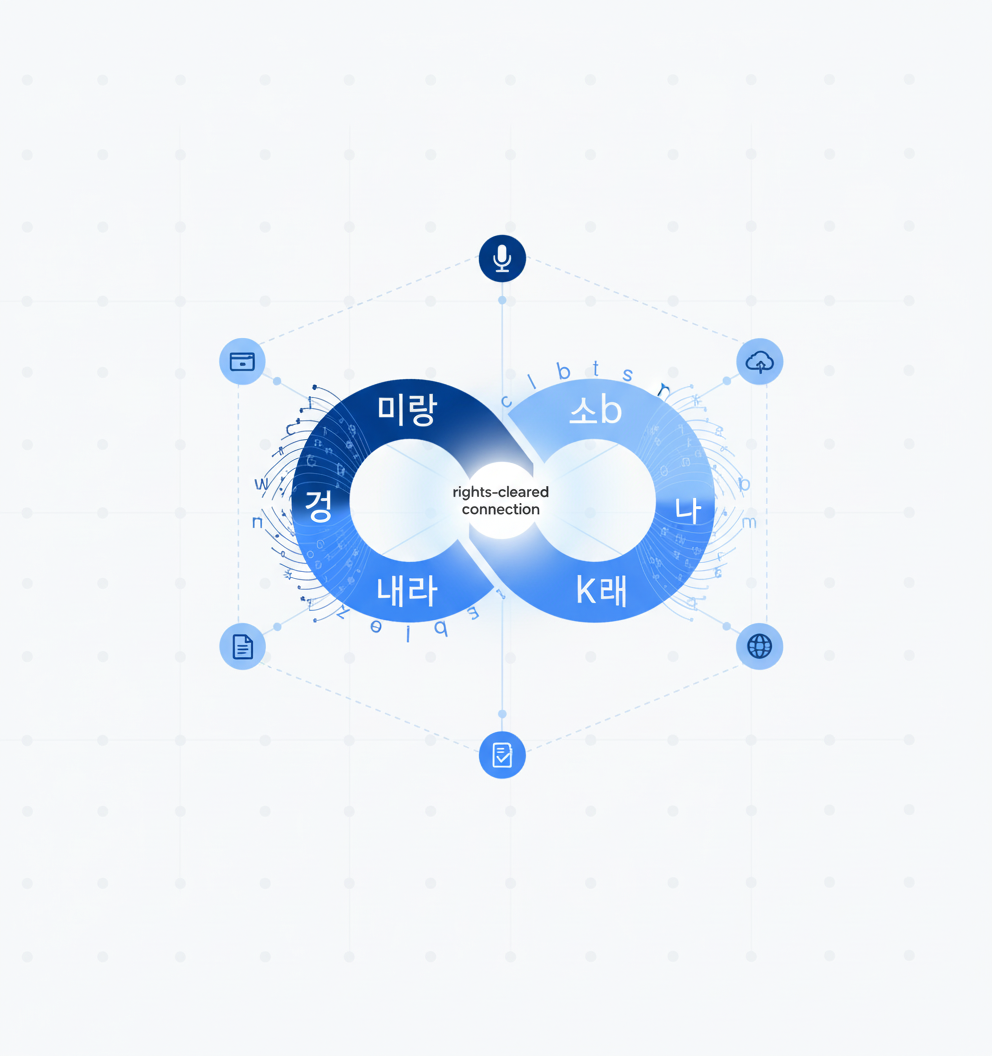

Luel operates a two-sided marketplace connecting AI teams with a global network of vetted contributors. The platform focuses on video, audio, and voice recordings, delivering curated, rights-cleared training data with full provenance.

Every dataset is rights-cleared, quality audited, and delivered with enterprise support. Luel sources from vetted contributors, maintains consent logs, and cross-checks every file for duplicates, safety issues, and instruction compliance. Delivery includes JSON manifests with clip metadata, transcripts, QA scores, and direct S3 download links.
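A manifest-based delivery lends itself to an automated intake check. The sketch below is illustrative only: the article does not document Luel's manifest schema, so the field names (`clip_id`, `transcript`, `qa_score`, `download_url`), the 0-to-1 QA scale, and the sample bucket path are all assumptions.

```python
import json

# Hypothetical required fields; a real manifest schema may differ.
REQUIRED_FIELDS = {"clip_id", "transcript", "qa_score", "download_url"}


def triage_manifest(manifest_text, min_qa_score=0.9):
    """Split manifest entries into (accepted, rejected) lists.

    An entry is rejected if any required field is missing or its
    QA score is below `min_qa_score`.
    """
    entries = json.loads(manifest_text)
    accepted, rejected = [], []
    for entry in entries:
        missing = REQUIRED_FIELDS - set(entry)
        if missing or entry["qa_score"] < min_qa_score:
            rejected.append(entry)
        else:
            accepted.append(entry)
    return accepted, rejected


# Made-up sample manifest for illustration.
SAMPLE_MANIFEST = """
[
  {"clip_id": "0001", "transcript": "good morning", "qa_score": 0.97,
   "download_url": "s3://example-bucket/clips/0001.wav"},
  {"clip_id": "0002", "qa_score": 0.99,
   "download_url": "s3://example-bucket/clips/0002.wav"},
  {"clip_id": "0003", "transcript": "thank you", "qa_score": 0.42,
   "download_url": "s3://example-bucket/clips/0003.wav"}
]
"""
accepted, rejected = triage_manifest(SAMPLE_MANIFEST)
```

Running a check like this before download keeps incomplete or low-scoring clips out of the training pipeline.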

Contributors receive payment within 24-48 hours after approval, which keeps the contributor pool engaged and data flowing. AI-powered and manual expert quality checks address the transcription errors and metadata gaps that plague crowdsourced platforms.

Datasets span professional meeting conversations, doctor-patient consultations, spontaneous and scripted monologue speech, multilingual contact center recordings, and expressive TTS voice collections in languages like Telugu and Spanish.

Built-in compliance and privacy controls

Voice data carries unique privacy risks. Under GDPR, voice qualifies as personal data requiring explicit consent before recording. Healthcare data breaches cost an average of $10.93 million per incident.

Luel bakes compliance into every dataset: consent releases, PII audits, and audit logging are included by default. This contrasts with recent litigation in the speech-to-text space, where a class-action lawsuit accuses Otter.ai of recording private conversations without permission, alleging violations of state and federal wiretap laws.

For enterprise AI teams operating under HIPAA, GDPR, or CCPA, built-in compliance infrastructure reduces legal exposure and accelerates procurement.

Luel vs Appen: which provider wins on speed, quality, and cost?

| Factor | Appen | Luel |
|---|---|---|
| Contributor network | 1M+ across 500+ languages | 3M+ global contributors |
| Pre-built datasets | 320+ datasets, 13,000+ hours | Curated enterprise collections |
| Transcription accuracy | Claims 99.5% on historical projects | AI-powered + manual QA checks |
| Contributor payout speed | Reported delays of 15+ days | 24-48 hours after approval |
| Compliance | Varies by project | Consent logs, PII audits, audit logging standard |
| Delivery format | Custom arrangements | JSON manifests, S3 links, structured metadata |
| Recent sentiment | TrustScore 1.8/5, payment and support complaints | Y Combinator W26, enterprise-focused |

Appen's 2024 State of AI report surveyed over 500 IT decision-makers and found companies reporting a 10% rise in data sourcing bottlenecks, a 9% drop in data accuracy, and a 7% increase in data availability challenges. Over 90% of respondents now seek partners with expertise across the full AI data lifecycle.

Luel addresses these pain points by operating as an end-to-end vendor handling sourcing, QA, legal, and delivery. Flexible licensing models—flat fee, per minute, or revenue share—accommodate different budget structures.

Checklist: how to vet your next speech data partner

Before signing a contract, evaluate potential providers across these dimensions:

AI-readiness: Does the provider understand that AI-ready data must be representative, not just clean? Gartner emphasizes assessing data needs by use case, not generic quality standards.

Compliance infrastructure: Ask for documentation of consent workflows, PII handling, and audit trails. Regulations vary by jurisdiction; your provider should demonstrate fluency in GDPR, HIPAA, and CCPA requirements.

Sociolinguistic coverage: Request details on speaker demographics, accent diversity, and domain balance. Digraphia and diglossia considerations matter for multilingual projects.

Quality dimensions: Gartner identifies nine common data quality dimensions—accessibility, accuracy, completeness, consistency, precision, relevancy, timeliness, uniqueness, and validity. Ask how the provider measures each.

Contributor experience: Unhappy contributors produce lower-quality data. Review Glassdoor and Trustpilot sentiment, and ask about payout timelines.

Delivery and governance: Confirm delivery formats, metadata schemas, and integration with your ML pipeline. Scale and govern from day one.
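To compare several vendors against this checklist, one option is a simple weighted scorecard. The sketch below is an assumption-laden illustration: the dimension keys, the 1-to-5 rating scale, and the equal default weights are placeholders to adapt, not a methodology from Gartner or either provider.

```python
# Hypothetical keys mirroring the six checklist items above.
CHECKLIST = (
    "ai_readiness",
    "compliance",
    "sociolinguistic_coverage",
    "quality_dimensions",
    "contributor_experience",
    "delivery_governance",
)


def score_vendor(ratings, weights=None):
    """Weighted average of per-dimension ratings (for example, on a 1-5 scale)."""
    if weights is None:
        weights = {name: 1.0 for name in CHECKLIST}

    missing = [name for name in CHECKLIST if name not in ratings]
    if missing:
        raise ValueError(f"missing ratings for: {', '.join(missing)}")

    total_weight = sum(weights[name] for name in CHECKLIST)
    weighted_sum = sum(ratings[name] * weights[name] for name in CHECKLIST)
    return weighted_sum / total_weight


# Placeholder ratings for an imaginary vendor, with compliance weighted double.
ratings = {name: 3 for name in CHECKLIST}
ratings["compliance"] = 5
weights = {name: 1.0 for name in CHECKLIST}
weights["compliance"] = 2.0
overall = score_vendor(ratings, weights)
```

Teams in regulated industries might weight compliance more heavily, as in the example, while a multilingual product might emphasize sociolinguistic coverage instead.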

Key takeaways

Appen built its reputation over 25+ years and still handles massive volumes of audio data. However, contributor complaints, payment delays, and the CrowdGen rollout have eroded trust. When crowdsourcing platforms lose contributor engagement, data quality suffers.

Luel's marketplace model prioritizes compliance, speed, and contributor satisfaction. Every collection is rights-cleared with consent logs and PII audits, and contributors receive payment within 24-48 hours.

The platform delivers structured metadata and direct download links, eliminating the export headaches that slow enterprise AI timelines. For AI teams requiring compliant, high-quality speech data at enterprise speed, Luel offers a more reliable, enterprise-ready pipeline than legacy providers struggling with scale-induced quality decline.

Frequently Asked Questions

What are the key differences between Luel and Appen for AI training data?

Luel offers a marketplace model with a focus on compliance, speed, and contributor satisfaction, providing rights-cleared, quality-audited data. Appen, while experienced, faces challenges with contributor complaints and payment delays, affecting data quality.

How does Luel ensure data quality and compliance?

Luel ensures data quality and compliance by using vetted contributors, maintaining consent logs, and performing AI-powered and manual quality checks. It provides structured metadata and direct download links, and its data is rights-cleared and designed to comply with regulations like GDPR and HIPAA.

What are the common complaints about Appen's services?

Common complaints about Appen include payment delays, support gaps, and project cancellations. Contributors have expressed dissatisfaction with low pay rates and inconsistent project availability, which can impact data quality.

How does Luel's contributor network compare to Appen's?

Luel boasts a global network of over 3 million contributors, offering fast and compliant data collection. In contrast, Appen has a network of over 1 million contributors but faces challenges with contributor engagement and satisfaction.

Why is speaker and language diversity important in speech data?

Speaker and language diversity are crucial for reducing word error rates and ensuring models can generalize across different accents, age groups, and speaking styles. Diverse datasets help capture sociolinguistic phenomena, improving model performance in real-world applications.

Sources

- https://www.trustpilot.com/review/appen.com

- https://www.gartner.com/en/data-analytics/topics/data-quality

- https://aclanthology.org/2025.acl-long.370.pdf

- https://www.gartner.com/en/articles/ai-ready-data

- https://aclanthology.org/2021.bppf-1.4.pdf

- https://assets.amazon.science/46/ff/f3125258493282df27566ec8356c/human-transcription-quality-improvement.pdf

- https://www.appen.com/ai-data/audio-data

- https://www.appen.com/llm-training-data

- https://www.glassdoor.com/Reviews/Appen-Reviews-E667913.htm

- https://www.swiftsalary.com/platform/appen/earner-reviews/

- https://www.luel.ai/enterprise

- https://luel.ai/

- https://deepgram.com/learn/speech-to-text-privacy

- https://www.npr.org/2025/08/15/g-s1-83087/otter-ai-transcription-class-action-lawsuit

- https://www.appen.com/