GDPR-compliant multimodal data: Comparing AI training data providers

Explore GDPR compliance in AI training data, comparing providers on transparency and legal documentation for multimodal datasets.

Comparing GDPR-compliant multimodal data providers reveals significant transparency gaps between vendor claims and documented compliance. While most providers advertise GDPR adherence, few can produce comprehensive documentation including Data Protection Impact Assessments, lawful basis registers, and consent audit trails that AI-ready data requires for regulatory compliance and safe model deployment.

TLDR

- True GDPR compliance for multimodal AI data requires documented lawful basis, DPIAs, cross-border transfer safeguards, and transparency reporting beyond basic platform certifications

- Model-centric providers like OpenAI and Mistral focus on API usage DPAs rather than pre-training dataset provenance documentation

- Voice and annotation specialists demonstrate stronger modality-specific compliance but buyers should still request explicit DPIA documentation and consent trails

- Gartner predicted that 75% of the global population would have their personal data covered under privacy regulations by 2024, making vendor transparency essential

- EU AI Act obligations for providers of general-purpose AI models apply from 2 August 2025. They require providers to document technical information about their models and to be ready for further transparency obligations.

- Buyers should prioritize vendors providing verifiable consent mechanisms, current DPIAs, active transfer safeguards, and accessible dataset cards

AI teams building next-generation multimodal models face a growing problem: finding training data that meets evolving privacy regulations while maintaining the quality needed for production systems. As regulatory scrutiny intensifies, the gap between what providers claim about compliance and what they actually demonstrate has become a critical business risk.

This comparison examines how leading AI training data providers stack up on GDPR compliance transparency, offering a framework for evaluating vendors and identifying the documentation you should demand before signing any contract.

What does "GDPR-compliant multimodal data" mean today?

GDPR-compliant multimodal data goes far beyond traditional notions of "high-quality" datasets. According to Gartner, "AI-ready data means that your data must be representative of the use case, of every pattern, errors, outliers and unexpected emergence that is needed to train or run an AI model for a specific use." But representativeness alone does not satisfy regulators.

True compliance means meeting evolving AI regulations, including both the EU AI Act and the GDPR. Every dataset must be collected on a valid legal basis, documented through impact assessments, and protected when it crosses borders.

Regulatory coverage has expanded quickly. Gartner predicted that by 2024, 75% of the global population would have their personal data covered under privacy regulations. Yet many AI data vendors still treat compliance documentation as an afterthought rather than a core deliverable, and this leaves a stark transparency gap between providers.

Gartner also predicted that by 2023, 30% of consumer-facing organizations would offer self-service transparency portals for preference and consent management. For buyers of AI training data, this signals a maturing expectation. A data provider that cannot show where its data came from and how consent was obtained is likely behind industry standards.

Why does GDPR matter for multimodal AI pipelines?

The stakes for non-compliance extend well beyond regulatory fines. The European Data Protection Board has made clear that "AI models trained with personal data cannot, in all cases, be considered anonymous." This single statement upends assumptions many AI teams hold about the safety of using scraped or purchased datasets.

The UK ICO guidance emphasizes that "the development and deployment of AI systems involve processing personal data in different ways for different purposes. You must break down and separate each distinct processing operation, and identify the purpose and an appropriate lawful basis for each one, in order to comply with the principle of lawfulness."

For multimodal pipelines that combine video, audio, and voice data, this creates layered compliance requirements. Voice recordings can carry biometric characteristics that identify a speaker. Video datasets may capture faces, license plates, or other personal information. Each modality, and each purpose it is processed for, needs its own lawful basis analysis.
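The ICO's instruction to separate each processing operation maps naturally onto a lawful basis register keyed by modality and purpose. The sketch below assumes such a register. The class name `ProcessingOperation`, the `missing_bases` helper, and the register entries are all hypothetical. The lawful bases shown are illustrative and are not legal advice.

```python
from typing import NamedTuple


class ProcessingOperation(NamedTuple):
    """One distinct processing operation: a modality used for a specific purpose."""

    modality: str  # e.g. "voice", "video", "audio"
    purpose: str   # e.g. "pre-training", "annotation", "evaluation"


# Hypothetical register entries; the lawful bases shown are illustrative only.
REGISTER: dict[ProcessingOperation, str] = {
    ProcessingOperation("voice", "pre-training"): "consent (Art. 6(1)(a) GDPR)",
    ProcessingOperation("video", "annotation"): "legitimate interests (Art. 6(1)(f) GDPR)",
}


def missing_bases(operations: list[ProcessingOperation],
                  register: dict[ProcessingOperation, str]) -> list[ProcessingOperation]:
    """Return every operation in the pipeline that has no documented lawful basis."""
    return [op for op in operations if op not in register]


pipeline = [
    ProcessingOperation("voice", "pre-training"),
    ProcessingOperation("video", "annotation"),
    ProcessingOperation("video", "pre-training"),  # same modality, new purpose
]
print(missing_bases(pipeline, REGISTER))
# [ProcessingOperation(modality='video', purpose='pre-training')]
```

Keying the register on the (modality, purpose) pair means that reusing an already-documented modality for a new purpose still shows up as a gap.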

"'High-quality' data — as judged by traditional data quality standards — does not equate to AI-ready data," notes Gartner. A dataset can be technically excellent while remaining legally unusable if its provenance cannot be demonstrated.

Key takeaway: Compliance is not a checkbox but a continuous obligation spanning the entire data lifecycle from collection through model deployment.

Which compliance pillars should AI data providers prove?

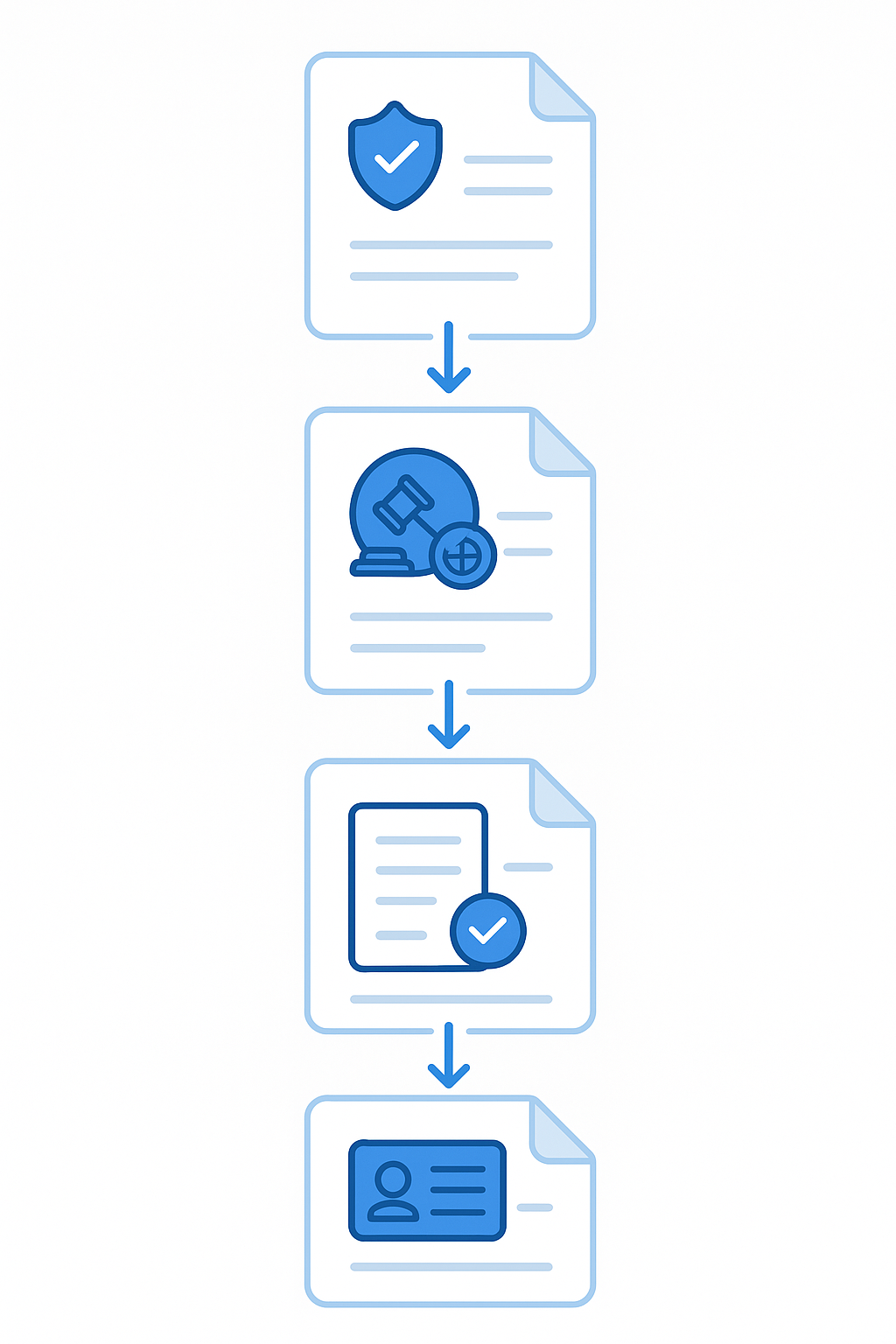

Buyers should evaluate data providers against four fundamental pillars: lawful basis documentation, Data Protection Impact Assessments, cross-border transfer safeguards, and transparency reporting.

1. Lawful basis & consent

The UK ICO states plainly: "Whenever you are processing personal data — whether to train a new AI system, or make predictions using an existing one — you must have an appropriate lawful basis to do so."

The EDPB has adopted guidance clarifying that a three-step test helps assess the use of legitimate interest as a legal basis. This test requires providers to demonstrate:

- A legitimate interest exists

- Processing is necessary to achieve that interest

- The data subjects' interests or fundamental rights and freedoms do not override that interest
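The three steps are cumulative: failing any one of them means the provider needs a different lawful basis. As a minimal sketch, a provider could record the test for each processing operation and report which steps are missing. The `LegitimateInterestAssessment` class and its fields are hypothetical and do not come from any EDPB template.

```python
from dataclasses import dataclass


@dataclass
class LegitimateInterestAssessment:
    """Hypothetical record of the three-step test for one processing operation."""

    processing_operation: str  # e.g. "voice recordings -> speech model training"
    interest_stated: str       # step 1: the legitimate interest pursued ("" if none)
    necessity_rationale: str   # step 2: why the processing is necessary ("" if not shown)
    balancing_passed: bool     # step 3: data subjects' rights do not override the interest

    def failed_steps(self) -> list[str]:
        """Return the names of steps that are undocumented or not passed."""
        failures = []
        if not self.interest_stated.strip():
            failures.append("legitimate interest")
        if not self.necessity_rationale.strip():
            failures.append("necessity")
        if not self.balancing_passed:
            failures.append("balancing")
        return failures

    def can_rely_on_legitimate_interest(self) -> bool:
        # All three steps must hold; otherwise another basis (e.g. consent) is needed.
        return not self.failed_steps()


voice = LegitimateInterestAssessment(
    processing_operation="contributor voice clips -> ASR training",
    interest_stated="improving speech recognition accuracy",
    necessity_rationale="",  # not documented by the provider
    balancing_passed=True,
)
print(voice.failed_steps())  # ['necessity']
```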

For multimodal data involving voice or video, consent typically provides the clearest path. Providers should document explicit consent mechanisms, compensation structures, and ongoing control mechanisms for contributors.

2. DPIAs & high-risk processing

A Data Protection Impact Assessment is "a process to help you identify and minimise the data protection risks of a project," according to the ICO's accountability guidance. For AI training data, DPIAs are typically mandatory.

The EDPS specifies that a DPIA is required for "systematic and extensive evaluation of personal aspects relating to natural persons based on automated processing" and for "processing on a large scale of special categories of data." Many multimodal AI training operations are likely to meet at least one of these thresholds, particularly those that process faces or voices at scale.

Data providers should be able to share recent DPIAs covering their collection and annotation workflows. If a provider cannot produce this documentation, they may be operating outside regulatory requirements.
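A buyer can run a first-pass screen against the two EDPS criteria quoted above before asking for the DPIA itself. The sketch below covers only those two criteria and is not an exhaustive legal test. The `ProcessingProfile` fields are hypothetical yes/no judgements that the provider or buyer would still have to justify.

```python
from dataclasses import dataclass


@dataclass
class ProcessingProfile:
    """Hypothetical description of one collection or annotation workflow."""

    systematic_automated_evaluation: bool  # systematic, extensive evaluation via automated processing
    special_category_data: bool            # e.g. biometric data used to identify people, health data
    large_scale: bool                      # judgement of scale for this workflow


def dpia_triggers(profile: ProcessingProfile) -> list[str]:
    """Return which of the two quoted EDPS criteria the workflow meets.

    Only those two criteria are checked; an empty result does not prove
    that no DPIA is needed.
    """
    triggers = []
    if profile.systematic_automated_evaluation:
        triggers.append("systematic and extensive automated evaluation")
    if profile.special_category_data and profile.large_scale:
        triggers.append("large-scale special category data")
    return triggers


face_video = ProcessingProfile(
    systematic_automated_evaluation=False,
    special_category_data=True,
    large_scale=True,
)
print(dpia_triggers(face_video))  # ['large-scale special category data']
```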

3. Cross-border transfers & SCCs

When training data crosses borders, Standard Contractual Clauses become essential. The European Commission's implementing decision establishes that "the standard contractual clauses set out in the Annex to this Decision combine general clauses with a modular approach to cater for various transfer scenarios and the complexity of modern processing chains."

The EDPB has published final guidelines clarifying that "judgements or decisions from third country authorities cannot automatically be recognised or enforced in Europe." A request from a foreign authority therefore does not, on its own, make a transfer lawful. Data providers with global contributor networks must demonstrate active transfer safeguards, not just contractual boilerplate.

Buyers should request current SCCs, evidence of Transfer Impact Assessments, and documentation of supplementary measures for transfers to jurisdictions without adequacy decisions.
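The request above can be turned into a per-transfer gap check. In this minimal sketch, the `TransferRecord` fields and `transfer_gaps` helper are hypothetical. The set of adequacy destinations is passed in by the caller rather than hard-coded, because the list of adequacy decisions changes over time. The country codes in the example are placeholders.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TransferRecord:
    """Hypothetical entry in a provider's register of cross-border transfers."""

    destination: str              # country code of the recipient
    mechanism: Optional[str]      # e.g. "SCC", "BCR", or None if nothing is in place
    tia_completed: bool           # Transfer Impact Assessment on file
    supplementary_measures: bool  # documented supplementary measures


def transfer_gaps(record: TransferRecord, adequate: set[str]) -> list[str]:
    """List the documentation a buyer should still request for one transfer."""
    if record.destination in adequate:
        return []  # covered by an adequacy decision; no SCC/TIA check in this sketch
    gaps = []
    if record.mechanism not in {"SCC", "BCR"}:
        gaps.append("no SCC or BCR")
    if not record.tia_completed:
        gaps.append("no transfer impact assessment")
    if not record.supplementary_measures:
        gaps.append("no documented supplementary measures")
    return gaps


# "XX" and "YY" are placeholder country codes, not real adequacy statuses.
record = TransferRecord("XX", "SCC", tia_completed=True, supplementary_measures=False)
print(transfer_gaps(record, adequate={"YY"}))  # ['no documented supplementary measures']
```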

Our transparency-first comparison methodology

This evaluation draws on frameworks developed by the Open Data Institute and industry governance standards. The ODI developed the AI Data Transparency Index (AIDTI), "a maturity assessment framework designed to evaluate the level of data transparency across AI models."

The AIDTI categorizes providers into maturity levels based on documentation practices: "High maturity: Demonstrated by five model providers, characterised by detailed accessible documentation, consistent use of transparency tools, and a proactive approach to explaining decisions made in the development process."

Forrester's Data and AI Governance Model emphasizes that "organizations must balance robust governance with broad democratization, all managed through a product mindset that treats every dataset or model as a customer-focused product." This balance delivers five strategic outcomes: security, privacy, compliance, self-service, and discovery.

Our evaluation criteria include:

| Criterion | What to look for |
|---|---|
| Lawful basis register | Documented legal basis for each data modality |
| DPIA availability | Recent assessments covering collection workflows |
| SCC/BCR documentation | Current transfer mechanisms with supplementary measures |
| Consent audit trails | Verifiable records of contributor permissions |
| Transparency reporting | Public or customer-accessible dataset cards |
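One simple way to apply these criteria is to count only the criteria a vendor backs with an actual document, and to treat a bare claim as a gap. The sketch below assumes equal weights and uses hypothetical criterion keys. A real evaluation might weight DPIAs or consent trails more heavily.

```python
CRITERIA = (
    "lawful_basis_register",
    "dpia_availability",
    "scc_bcr_documentation",
    "consent_audit_trails",
    "transparency_reporting",
)


def score_vendor(evidence: dict[str, bool]) -> tuple[int, list[str]]:
    """Count criteria backed by documents the vendor actually produced.

    Criteria absent from `evidence` count as not evidenced: a claim
    without a document scores zero.
    """
    missing = [c for c in CRITERIA if not evidence.get(c, False)]
    return len(CRITERIA) - len(missing), missing


vendor_a = {
    "lawful_basis_register": True,
    "dpia_availability": False,
    "scc_bcr_documentation": True,
    "consent_audit_trails": True,
}
score, missing = score_vendor(vendor_a)
print(f"{score}/{len(CRITERIA)}", missing)
# 3/5 ['dpia_availability', 'transparency_reporting']
```

The `missing` list doubles as the follow-up request to send the vendor.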

How do leading AI training data providers stack up on GDPR?

The market includes model-centric providers offering DPAs primarily for API usage, hybrid platforms combining labeling infrastructure with managed services, and specialists focused on specific data modalities. Each category presents different compliance characteristics.

OpenAI & Mistral: model-centric DPAs

OpenAI's Data Processing Addendum establishes that OpenAI acts as a Data Processor on the customer's behalf. The DPA covers API and ChatGPT Enterprise services and specifies compliance with the GDPR, the CCPA, and other U.S. state privacy laws.

Mistral AI's DPA explicitly states that "Mistral AI is authorized to process the Personal Data as Controller for the purposes of: Training its artificial intelligence models in accordance with its, unless (a) Customer opted-out of training or (b) uses a Mistral AI Product that is opted-out by default and has not opted-in."

This opt-out structure represents a significant transparency consideration. Customers must actively disable training usage rather than explicitly consenting to it.
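The double negative in the quoted clause is easier to read as a boolean rule. The function below is a hypothetical encoding of that clause's wording only. It is not Mistral AI's implementation or an authoritative reading of the contract. It shows that a customer who takes no action on a standard product leaves training use permitted.

```python
def training_use_permitted(customer_opted_out: bool,
                           product_opted_out_by_default: bool,
                           customer_opted_in: bool) -> bool:
    """Encode the quoted clause: training is allowed unless exception (a) or (b) applies."""
    exception_a = customer_opted_out  # (a) the customer opted out of training
    # (b) the product is opted out by default and the customer has not opted in
    exception_b = product_opted_out_by_default and not customer_opted_in
    return not (exception_a or exception_b)


# A customer who does nothing on a product that is not opted out by default:
print(training_use_permitted(False, False, False))  # True
```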

Both providers focus on protecting customer data submitted through their APIs rather than documenting the provenance of their pre-training datasets. For teams sourcing training data rather than using inference services, these DPAs address only part of the compliance picture.

Scale AI & Gauge: hybrid platforms

Scale AI and Gauge position themselves as full-stack providers serving AI labs, governments, and Fortune 500 companies. Scale AI states that its cloud platform's infrastructure and operations are certified compliant with industry best practice standards and frameworks.

Gauge similarly emphasizes that its platform is certified compliant with industry best practice standards. Both providers highlight SOC 2 compliance and enterprise security measures.

However, certification of infrastructure differs from documentation of dataset provenance. A BCG and MIT Sloan report found that "more than half (55%) of all AI-related failures stem from third-party AI tools" and "a fifth (20%) of organizations that use third-party AI tools fail to evaluate the risks at all."

Buyers should distinguish between platform security certifications and dataset-level compliance documentation when evaluating these providers.

Voice & annotation specialists

Voices.com positions itself around ethical sourcing, stating: "We follow a framework based on consent, compensation, and control. Our process meets global privacy standards like GDPR and CCPA." Their talent pool spans over 100 languages and accents across 160+ countries.

Trint emphasizes that "unlike other AI transcription solutions, we never listen to your recordings to train our algorithms." The company holds ISO 27001 and Cyber Essentials certifications with options for EU or US data storage.

Label Your Data is described on G2 and Clutch as a leading multimodal annotation vendor for flexibility, compliance, and transparent pricing. It holds SOC 2 and ISO 27001 certifications and reports HIPAA and GDPR compliance.

These specialists demonstrate stronger alignment with GDPR requirements for specific modalities, though buyers should still request DPIA documentation and consent audit trails rather than relying solely on certification claims.

How do you vet a data partner for GDPR & AI Act readiness?

The Alliance for Responsible Data Collection recommends that "all data collection activities must comply with applicable laws" and that organizations "maintain a program to oversee and monitor data collection processes and conduct periodic reviews of data collection practices."

A practical due-diligence checklist should include:

- Request DPIAs: Ask for recent Data Protection Impact Assessments covering collection, annotation, and distribution workflows

- Verify lawful basis registers: Demand documentation showing the legal basis for processing each data modality

- Review transfer mechanisms: For global datasets, confirm current SCCs or BCRs with supplementary measures

- Audit consent trails: Request sample consent documentation and verification processes

- Evaluate transparency artifacts: Look for AIDTI-style dataset cards or equivalent documentation
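When auditing consent trails, a useful question is whether the records are tamper-evident. Hash chaining is one common technique for this, though not one that any provider above is documented to use. Each entry commits to the hash of the previous entry, so an edited or reordered record breaks verification. The `ConsentLog` class below is a hypothetical minimal sketch. It omits timestamps, signatures, and storage.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class ConsentLog:
    """Append-only, hash-chained consent log (illustrative, not a vendor API)."""

    entries: list[dict] = field(default_factory=list)

    def append(self, contributor_id: str, asset_id: str, action: str) -> dict:
        # action is "granted" or "withdrawn"; each entry commits to the previous hash.
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"contributor": contributor_id, "asset": asset_id,
                "action": action, "prev": prev_hash}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("contributor", "asset", "action", "prev")}
            if entry["prev"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True

    def has_active_consent(self, contributor_id: str, asset_id: str) -> bool:
        # The most recent action for this contributor/asset pair wins.
        state = False
        for entry in self.entries:
            if entry["contributor"] == contributor_id and entry["asset"] == asset_id:
                state = entry["action"] == "granted"
        return state


log = ConsentLog()
log.append("c-001", "clip-42", "granted")
log.append("c-001", "clip-42", "withdrawn")
print(log.verify(), log.has_active_consent("c-001", "clip-42"))  # True False
log.entries[0]["action"] = "withdrawn"  # simulate tampering with an old record
print(log.verify())  # False
```

Recording withdrawal as a new entry, rather than deleting the grant, keeps the contributor's "ongoing control" visible in the audit trail.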

BCG research indicates that "organizations that employ seven different methods are more than twice as likely to uncover AI failures compared with those that use only three (51% versus 24%)." That finding concerns AI risk evaluation in general. Still, it suggests that vetting data partners across several compliance dimensions is more likely to surface problems than relying on a single certification.

According to Gartner, the EU AI Act mandates "impact assessments on fundamental individual rights, adopting processes to minimize bias in AI outputs and disclosing AI use to customers and regulators." Data partners should demonstrate readiness for these requirements even before full enforcement.

What's next: EU AI Act & global convergence on data governance

The regulatory landscape continues to evolve rapidly. The European Commission's guidelines use training compute as an indicative criterion. A model is likely to qualify as a general-purpose AI model if the computational resources used to train it exceed 10^23 floating point operations (FLOP). Models trained with more than 10^25 FLOP are presumed to have systemic risk.
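To see where a model falls against these thresholds, you need an estimate of its training compute. The sketch below uses the widely cited rule of thumb of about 6 FLOP per parameter per training token for dense transformers. This heuristic is an assumption here and not a method the guidelines prescribe. The guidelines also attach further conditions, which this sketch ignores. The model sizes in the example are hypothetical.

```python
GPAI_INDICATIVE_FLOP = 1e23  # indicative general-purpose AI threshold
SYSTEMIC_RISK_FLOP = 1e25    # presumption of systemic risk


def estimate_training_flop(parameters: float, training_tokens: float) -> float:
    # Rule of thumb for dense transformers: ~6 FLOP per parameter per token
    # (forward plus backward pass). An approximation, not a regulatory method.
    return 6 * parameters * training_tokens


def classify_by_compute(training_flop: float) -> str:
    # Both thresholds are "exceeds", so exactly 1e23 stays below the first one.
    if training_flop > SYSTEMIC_RISK_FLOP:
        return "presumed GPAI with systemic risk"
    if training_flop > GPAI_INDICATIVE_FLOP:
        return "indicatively GPAI"
    return "below indicative GPAI threshold"


# Hypothetical 7B-parameter model trained on 2T and on 15T tokens.
for params, tokens in [(7e9, 2e12), (7e9, 15e12)]:
    flop = estimate_training_flop(params, tokens)
    print(f"{flop:.1e}", classify_by_compute(flop))
# 8.4e+22 below indicative GPAI threshold
# 6.3e+23 indicatively GPAI
```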

The AI Act requires providers of general-purpose AI models to "document technical information about their models for the purpose of providing that information upon request to the AI Office and national competent authorities and making it available to downstream providers."

Key compliance dates are approaching:

- 2 August 2025: Obligations for providers of GPAI models enter into application

- 2 August 2026: Commission enforcement powers enter into application

- 2 August 2027: Providers of GPAI models placed on the market before 2 August 2025 must comply
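To check which of these milestones apply on a given date, a small lookup table is enough. The helper below is a hypothetical sketch that copies only the three dates listed above. It is not an official compliance tool.

```python
from datetime import date

# The three milestones listed above.
MILESTONES = [
    (date(2025, 8, 2), "GPAI provider obligations apply"),
    (date(2026, 8, 2), "Commission enforcement powers apply"),
    (date(2027, 8, 2), "Deadline for GPAI models placed on the market before 2 August 2025"),
]


def milestones_in_effect(on: date) -> list[str]:
    """Milestones whose start date is on or before `on`."""
    return [label for start, label in MILESTONES if on >= start]


def next_milestone(on: date):
    """Return (label, days remaining) for the next milestone, or None if all have passed."""
    upcoming = [(start, label) for start, label in MILESTONES if start > on]
    if not upcoming:
        return None
    start, label = upcoming[0]
    return label, (start - on).days


today = date(2026, 1, 15)
print(milestones_in_effect(today))  # ['GPAI provider obligations apply']
print(next_milestone(today))        # ('Commission enforcement powers apply', 199)
```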

Data providers that cannot demonstrate compliance with current GDPR requirements will likely struggle with the additional transparency and documentation obligations under the AI Act.

Key takeaways for building safe, future-proof AI models

Compliance transparency separates mature data providers from those creating downstream legal exposure. When evaluating partners, prioritize those who can demonstrate:

- Verifiable consent mechanisms with audit trails

- Current DPIAs covering their specific collection workflows

- Active transfer safeguards for cross-border data

- Dataset cards or transparency reports accessible to customers

Luel operates a two-sided AI training data marketplace connecting AI teams with a global network of vetted contributors. The platform provides rights-cleared multimodal training data with full provenance. It maintains consent logs and cross-checks every file for duplicates, safety issues, and instruction compliance. Consent releases, PII audits, and audit logging are built into every dataset.

For AI teams building multimodal models, the question is no longer whether compliance documentation matters but whether your current data partners can provide it. The providers who treat transparency as a core capability rather than an afterthought will define the next generation of responsible AI development.

Frequently Asked Questions

What is GDPR-compliant multimodal data?

GDPR-compliant multimodal data refers to datasets that not only meet high quality standards but also adhere to privacy regulations such as the GDPR and the EU AI Act. This includes having a valid legal basis for data collection, conducting impact assessments, and protecting data during cross-border transfers.

Why is GDPR important for AI pipelines?

GDPR is crucial for AI pipelines because it ensures that personal data used in training AI models is handled lawfully and ethically. Non-compliance can lead to significant fines and legal issues, especially since AI models often process personal data in complex ways that require careful legal consideration.

What are the key compliance pillars for AI data providers?

AI data providers should demonstrate compliance through lawful basis documentation, Data Protection Impact Assessments (DPIAs), cross-border transfer safeguards, and transparency reporting. These pillars ensure that data is collected and processed legally and ethically.

How does Luel ensure GDPR compliance in its data marketplace?

Luel ensures GDPR compliance by providing rights-cleared multimodal training data with full provenance. The platform maintains consent logs, conducts PII audits, and cross-checks every file for duplicates and safety issues, integrating consent releases and audit logging into every dataset.

What should buyers look for in a GDPR-compliant data provider?

Buyers should look for providers that offer verifiable consent mechanisms, current DPIAs, active cross-border data transfer safeguards, and accessible transparency reports or dataset cards. These elements indicate a provider's commitment to compliance and transparency.

Sources

- https://www.gartner.com/en/articles/ai-ready-data

- https://www.gartner.com/en/newsroom/press-releases/2022-05-31-gartner-identifies-top-five-trends-in-privacy-through-2024

- https://ico.org.uk/for-organisations/uk-gdpr-guidance-and-resources/artificial-intelligence/guidance-on-ai-and-data-protection/how-do-we-ensure-lawfulness-in-ai/

- https://www.edpb.europa.eu/news/news/2024/edpb-opinion-ai-models-gdpr-principles-support-responsible-ai_en

- https://www.edps.europa.eu/data-protection-impact-assessment-dpia_en

- https://www.edpb.europa.eu/news/news/2025/edpb-publishes-final-version-guidelines-data-transfers-third-country-authorities-and_en

- https://www.forrester.com/report/the-forrester-data-and-ai-governance-model/RES184942

- https://openai.com/policies/data-processing-addendum/

- https://scale.com/

- https://gauge.to/

- https://www.voices.com/solutions/ai-voice-datasets

- https://trint.com/security

- https://www.gartner.com/en/articles/eu-ai-act-compliance

- https://artificialintelligenceact.eu/chapter/8/

- https://www.bundesnetzagentur.de/DE/Fachthemen/Digitales/KI/_functions/EU-Leitlinien.pdf?__blob=publicationFile&v=2

- https://commission.europa.eu/law/law-topic/data-protection/international-dimension-data-protection_en

- https://luel.ai