GDPR compliance checklist for off-the-shelf egocentric video datasets

Ensure GDPR compliance for egocentric video datasets with our comprehensive checklist, minimizing legal risks for AI projects.

Egocentric video datasets containing identifiable faces can trigger GDPR's special-category data rules, which call for an Article 9 condition such as explicit consent and a Data Protection Impact Assessment before use. The ICO confirms that biometric data is personal information subject to data protection law, and it considers explicit consent likely to be the most appropriate condition for processing special category biometric data, including head-mounted camera footage that captures bystanders' identifying features.

TLDR

• Head-mounted cameras create "mobile surveillance systems" that capture biometric data requiring GDPR Article 9 compliance and explicit consent from filmed individuals

• Six lawful bases exist for processing: consent, contract, legal obligation, vital interests, public task, and legitimate interests

• DPIAs must be completed before acquiring datasets, documenting nature, scope, risks, and mitigation measures

• Technical safeguards include face blurring, anonymization, retention limits, and secure cross-border transfer mechanisms

• Vendors should provide consent documentation, de-identification logs, and clear controller/processor agreements

• Luel offers rights-cleared datasets with consent releases, PII audits, and built-in audit logging for compliance

Head-mounted camera footage has moved squarely into GDPR's high-risk zone. Regulators now treat first-person video as a form of systematic monitoring that can capture faces, voices, license plates, and other identifying details without the filmed individuals ever knowing. For AI teams licensing off-the-shelf egocentric datasets, that classification creates real legal exposure.

This guide delivers a practical GDPR compliance checklist that de-risks egocentric video datasets and keeps your AI projects moving. You will learn why point-of-view footage triggers special-category rules, how to run a Data Protection Impact Assessment before procurement, and what to look for when vetting dataset vendors.

Why do AI teams need a GDPR compliance checklist for head-mounted video?

"Systematic automated monitoring of a specific space by optical or audio-visual means, mostly for property protection purposes, or to protect individual's life and health, has become a significant phenomenon of our days," according to the EDPB video surveillance guidelines. That language applies just as forcefully to egocentric datasets recorded through smart glasses, GoPros, or research headsets.

Unlike stationary CCTV, a body-worn camera turns the wearer into a mobile surveillance system. The ICO warns that such devices "can effectively turn the wearer into a mobile surveillance system that is likely to capture the personal data of passers-by," highlighting unique data protection challenges. Bystanders filmed in public have no opportunity to consent, yet their faces and identifying details may end up in a dataset sold across borders.

Biometric data is personal information, and the ICO states plainly that organisations "must comply with data protection law when you process it." That obligation applies regardless of whether you collected the footage yourself or licensed it from a third party.

Why are egocentric videos considered special-category biometric data under GDPR?

GDPR Article 9 restricts processing of special-category data, which includes biometric information used to uniquely identify a person. When a head-mounted camera captures a face at close range, and that image later feeds a recognition model, the footage meets the regulation's definition of biometric data.

The ICO notes that "explicit consent is likely to be the most appropriate condition available to you to process special category biometric data." Consent must be freely given, specific, informed, and unambiguous. Relying on vague terms buried in a dataset license will not satisfy that standard.

The EDPB reinforces the point: "The use of biometric data and in particular facial recognition entail heightened risks for data subjects' rights," as stated in their guidelines on video devices. When video devices monitor large public areas, regulators expect a DPIA before any processing begins. The EDPB confirms that "if video devices are being used to monitor a large public area, a data protection impact assessment (DPIA) must be carried out," per Article 35(3)(c).

The 9-point GDPR compliance checklist for egocentric video

The ICO defines a DPIA as "a process to help you identify and minimise the data protection risks of a project." For egocentric datasets, that process should start before you sign any licensing agreement and continue through deployment. Below is a step-by-step checklist.

Your DPIA must:

- Describe the nature, scope, context, and purposes of the processing

- Assess necessity, proportionality, and compliance measures

- Identify and assess risks to individuals

- Identify any additional measures to mitigate those risks

People retain specific rights over their data, "including rights to be informed, to access, to rectify (correct), to erase, to restrict, to port (move) and to object," as the ICO's research exemptions guidance explains.
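
Honouring these rights in a licensed dataset usually means tracing a request back to specific clips. The sketch below assumes a hypothetical manifest in which each clip lists the consented participants who appear in it; `clip_id` and `subject_ids` are illustrative field names, not a real vendor schema. Bystanders who never signed a release would not appear in such a list, so this covers only part of the problem.

```python
def clips_for_subject(clips: list[dict], subject_id: str) -> list[str]:
    """Return IDs of clips that list `subject_id` among their participants."""
    return [c["clip_id"] for c in clips if subject_id in c.get("subject_ids", [])]


# Hypothetical manifest entries; field names are assumptions for illustration.
clips = [
    {"clip_id": "kitchen_0001", "subject_ids": ["p-17"]},
    {"clip_id": "street_0042", "subject_ids": ["p-17", "p-23"]},
    {"clip_id": "office_0007", "subject_ids": ["p-05"]},
]

# An access or erasure request from participant p-17 touches two clips.
print(clips_for_subject(clips, "p-17"))
```

A lookup like this only works if the vendor's records reach back to individual contributors, which is one reason to ask for them during procurement.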

1. Identify lawful basis & Article 9 condition

GDPR provides six lawful bases for processing personal information: consent, contract, legal obligation, vital interests, public task, and legitimate interests. For special-category biometric data, you also need an Article 9 condition.

The ICO advises that "you must break down and separate each distinct processing operation, and identify the purpose and an appropriate lawful basis for each one, in order to comply with the principle of lawfulness." Consent may work when you have a direct relationship with data subjects. Legitimate interests may offer more flexibility during experimentation, but only after a balancing test shows that individual rights are protected.
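
One way to keep each processing operation separate is to record it as its own entry with a purpose, a lawful basis, and, where relevant, an Article 9 condition. The following is a minimal sketch; the class and field names are hypothetical, and the checks encode only the rules described in this section.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class LawfulBasis(Enum):
    CONSENT = "consent"
    CONTRACT = "contract"
    LEGAL_OBLIGATION = "legal obligation"
    VITAL_INTERESTS = "vital interests"
    PUBLIC_TASK = "public task"
    LEGITIMATE_INTERESTS = "legitimate interests"


@dataclass
class ProcessingOperation:
    name: str
    purpose: str
    lawful_basis: Optional[LawfulBasis] = None
    special_category: bool = False            # e.g. biometric data used to identify people
    article9_condition: Optional[str] = None  # e.g. "explicit consent"
    balancing_test_done: bool = False         # only relevant for legitimate interests


def gaps(op: ProcessingOperation) -> list[str]:
    """List what is still undocumented for a single processing operation."""
    problems = []
    if op.lawful_basis is None:
        problems.append("no lawful basis")
    if op.special_category and not op.article9_condition:
        problems.append("no Article 9 condition")
    if op.lawful_basis is LawfulBasis.LEGITIMATE_INTERESTS and not op.balancing_test_done:
        problems.append("no balancing test")
    return problems


op = ProcessingOperation(
    name="train action-recognition model",
    purpose="model development",
    lawful_basis=LawfulBasis.LEGITIMATE_INTERESTS,
    special_category=True,
)
print(gaps(op))
```

Running `gaps` over every operation in a pipeline gives a quick view of where documentation is missing; it does not replace the legal analysis itself.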

2. Run a Data Protection Impact Assessment

GOV.UK surveillance camera guidance recommends carrying out a DPIA when:

- Cameras are added, removed, or moved

- Systems are upgraded

- Biometric capabilities such as facial recognition are in use

A DPIA should begin early in the life of a project. The ICO advises starting "before you start your processing, and run alongside the planning and development process." If you identify a high risk that you cannot mitigate, you must consult the ICO before proceeding.

Checklist items 3 through 9:

| Step | Action |
|---|---|
| 3. Collect explicit consent or document legitimate interest | Ensure consent is specific, informed, and revocable |
| 4. De-identify or anonymise footage | Blur faces, license plates, and other PII |
| 5. Set retention limits | Delete data when no longer necessary for purpose |
| 6. Secure cross-border transfers | Use Standard Contractual Clauses where required |
| 7. Define controller and processor roles | Document responsibilities in written contracts |
| 8. Enable subject-rights workflows | Allow access, rectification, and erasure requests |
| 9. Audit vendors for transparency and DPIA readiness | Request evidence of compliance before procurement |

Key takeaway: Complete the DPIA before acquiring a dataset, not after.
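
Step 5 of the checklist is one of the easier items to automate. The sketch below flags clips whose ingestion date is older than a retention period; the function name, the clip IDs, and the 365-day period are assumptions for illustration, since the appropriate period depends on the purpose documented in your DPIA.

```python
from datetime import date, timedelta


def clips_past_retention(ingested: dict[str, date], retention_days: int, today: date) -> list[str]:
    """Return clip IDs ingested more than `retention_days` before `today`."""
    cutoff = today - timedelta(days=retention_days)
    return sorted(clip for clip, day in ingested.items() if day < cutoff)


# Hypothetical ingestion log: clip ID -> date the clip entered the pipeline.
ingested = {
    "clip_001": date(2024, 1, 10),
    "clip_002": date(2024, 11, 2),
}

print(clips_past_retention(ingested, retention_days=365, today=date(2025, 3, 1)))
```

Passing `today` explicitly keeps the check deterministic, which makes it easier to test and to reproduce in an audit.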

Which technical safeguards minimise privacy risk in POV datasets?

Anonymisation and de-identification sit at the heart of GDPR compliance for video. The Ego4D consortium provides a useful benchmark: "Videos were de-identified, removing personally identifiable information, including, for example, blurring bystanders' faces or passing license plate numbers," according to their privacy and ethics statement.
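
To make the idea of obscuring a region concrete, here is a minimal pure-Python sketch that pixelates a bounding box in a grayscale frame. It assumes the box comes from a face or plate detector that is not shown, and it represents the frame as a list of rows of integers. It illustrates the general technique only and is not a description of how Ego4D or any other dataset was processed; production pipelines typically rely on stronger methods.

```python
def pixelate_region(image, box, block=2):
    """Return a copy of `image` with the area inside `box` pixelated.

    `image` is a list of rows of integer pixel values.
    `box` is (top, left, bottom, right), with bottom and right exclusive.
    Each block x block tile inside the box is replaced by its mean value.
    """
    top, left, bottom, right = box
    out = [row[:] for row in image]  # copy so the original frame is untouched
    for y0 in range(top, bottom, block):
        for x0 in range(left, right, block):
            ys = range(y0, min(y0 + block, bottom))
            xs = range(x0, min(x0 + block, right))
            mean = sum(image[y][x] for y in ys for x in xs) // (len(ys) * len(xs))
            for y in ys:
                for x in xs:
                    out[y][x] = mean
    return out


frame = [
    [0, 10, 1, 2],
    [20, 30, 3, 4],
    [5, 6, 7, 8],
    [9, 10, 11, 12],
]
blurred = pixelate_region(frame, box=(0, 0, 2, 2), block=2)
print(blurred[0], blurred[1])
```

Pixels outside the box are left unchanged, so downstream tasks such as action detection still see the rest of the scene.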

Researchers have developed adversarial training methods that remove privacy-sensitive information while preserving action-detection performance. One approach "use[s] an adversarial training setting in which two competing systems fight: (1) a video anonymizer that modifies the original video to remove privacy-sensitive information while still trying to maximize spatial action detection performance, and (2) a discriminator that tries to extract privacy-sensitive information from the anonymized videos," as described in UC Davis research.

More recent work introduces diffusion-based inpainting that synthesises new facial identities while preserving age, gender, pose, and expression. The AnonNET framework "de-identif[ies] facial videos, while preserving age, gender, race, pose, and expression of the original video," responding to GDPR and the EU AI Act's "strict constraints on collection, processing, and dissemination of personal data, including biometric identifiers such as images and videos of the human face," per an ICCV 2025 workshop paper.

Best-practice safeguards include:

- Pixel-level face anonymisation before distribution

- Separate storage of raw data and biometric templates

- Access controls tied to signed license agreements

- Regional storage compliant with GDPR and local requirements

How to vet dataset vendors: Appen vs. a transparency-first approach

Before procuring any AI dataset, the ICO expects "full consideration of the controller, processor or joint controller relationship throughout the supply chain in the use of AI systems." Without that clarity, the ICO's contracts guidance warns, the parties will fail to meet their obligations under the UK GDPR.

Appen states that it adheres "to GDPR principles as it applies to our 1 million+ contributors that make up our crowd offering," per its data security page. The company holds SOC2 Type II attestation and ISO 27001:2013 certification. Yet its public documentation stops short of disclosing how it obtains Article 9 conditions for biometric video, whether DPIAs are conducted for specific datasets, or how data-subject rights are honoured after footage enters a customer's pipeline.

A transparency-first vendor should provide:

- Evidence of explicit consent or a documented lawful basis for each dataset

- A completed DPIA available on request

- De-identification logs and QA audit trails

- Clear controller/processor designations in the licensing contract

- A subject-rights workflow that reaches back to original contributors

Without those artefacts, buyers inherit compliance risk that could surface months or years later.
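
The list above can be turned into a simple procurement gate. The sketch below is a hypothetical helper: the artefact keys are names chosen for this example, and a `True` value only records that a document was received, not that it is adequate.

```python
# Keys are illustrative labels for the five artefacts listed above.
REQUIRED_ARTEFACTS = (
    "consent_or_lawful_basis_evidence",
    "dpia",
    "deidentification_logs",
    "controller_processor_designation",
    "subject_rights_workflow",
)


def missing_artefacts(vendor_pack: dict[str, bool]) -> list[str]:
    """Return the required artefacts the vendor has not yet supplied."""
    return [name for name in REQUIRED_ARTEFACTS if not vendor_pack.get(name, False)]


# Hypothetical response from a vendor during due diligence.
vendor_pack = {
    "consent_or_lawful_basis_evidence": True,
    "dpia": False,
    "deidentification_logs": True,
}
print(missing_artefacts(vendor_pack))
```

An empty result means every artefact was received and is ready for review by legal or compliance staff.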

What licensing and cross-border clauses keep POV data lawful?

Cross-border data transfers require additional safeguards. The European Commission adopted new Standard Contractual Clauses in June 2021, and "agreements to transfer data concluded after 27 September 2021 must be based on the new SCCs," according to the Commission's Q&A overview. The SCCs require a transfer impact assessment to verify that destination-country laws do not prevent compliance.
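
The transfer logic can be sketched as a small decision function. It assumes the destination is outside the EEA and that the caller supplies the current set of countries covered by an adequacy decision; the function name and the placeholder country codes are hypothetical, and no real adequacy list is implied.

```python
def outstanding_transfer_steps(
    destination: str,
    adequate_countries: set[str],
    sccs_signed: bool,
    tia_completed: bool,
) -> list[str]:
    """List the safeguards still needed before footage is sent to `destination`."""
    if destination in adequate_countries:
        # An adequacy decision means SCCs are not needed for this transfer.
        return []
    steps = []
    if not sccs_signed:
        steps.append("sign Standard Contractual Clauses")
    if not tia_completed:
        steps.append("complete transfer impact assessment")
    return steps


# "AA" and "BB" are placeholder codes, not real jurisdictions.
adequate = {"AA"}
print(outstanding_transfer_steps("BB", adequate, sccs_signed=True, tia_completed=False))
```

Keeping the adequacy set as an input rather than a constant reflects the fact that adequacy decisions change over time and should be checked against the Commission's current list.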

Emerging frameworks such as the AI Privacy License offer machine-readable terms that specify "how, where, and if [data] may be used in AI systems, especially for training or fine-tuning large models," as described on the AI Privacy License website. Embedding such licenses in dataset manifests can automate compliance checks at ingestion time.
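
As an illustration of an ingestion-time check, the sketch below reads licence terms from a JSON manifest and decides whether a given use is allowed. The manifest fields (`ai_training`, `commercial_use`, `regions`) are invented for this example and are not the AI Privacy License schema or any vendor's actual format.

```python
import json

# Hypothetical manifest; the structure is an assumption made for this example.
manifest_json = """
{
  "dataset": "example-egocentric-set",
  "license": {"ai_training": true, "commercial_use": false, "regions": ["EU", "UK"]}
}
"""


def ingestion_allowed(manifest: dict, *, commercial: bool, region: str) -> bool:
    """Check an intended use against the licence terms in a manifest."""
    terms = manifest.get("license", {})
    if not terms.get("ai_training", False):
        return False  # training use is not granted, or the terms are missing
    if commercial and not terms.get("commercial_use", False):
        return False  # commercial use is not covered by the licence
    return region in terms.get("regions", [])


manifest = json.loads(manifest_json)
print(ingestion_allowed(manifest, commercial=False, region="EU"))
print(ingestion_allowed(manifest, commercial=True, region="EU"))
```

The check fails closed: a manifest with no licence block is rejected rather than assumed to be permissive.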

Non-commercial research datasets often restrict use to academic purposes. The Waymo Open Dataset terms state plainly: "You may not use the Dataset in any manner that violates applicable privacy laws," and Waymo "takes reasonable care to remove or hide personally identifiable information," per its terms page. Buyers must confirm that their intended commercial application falls within the licence scope.

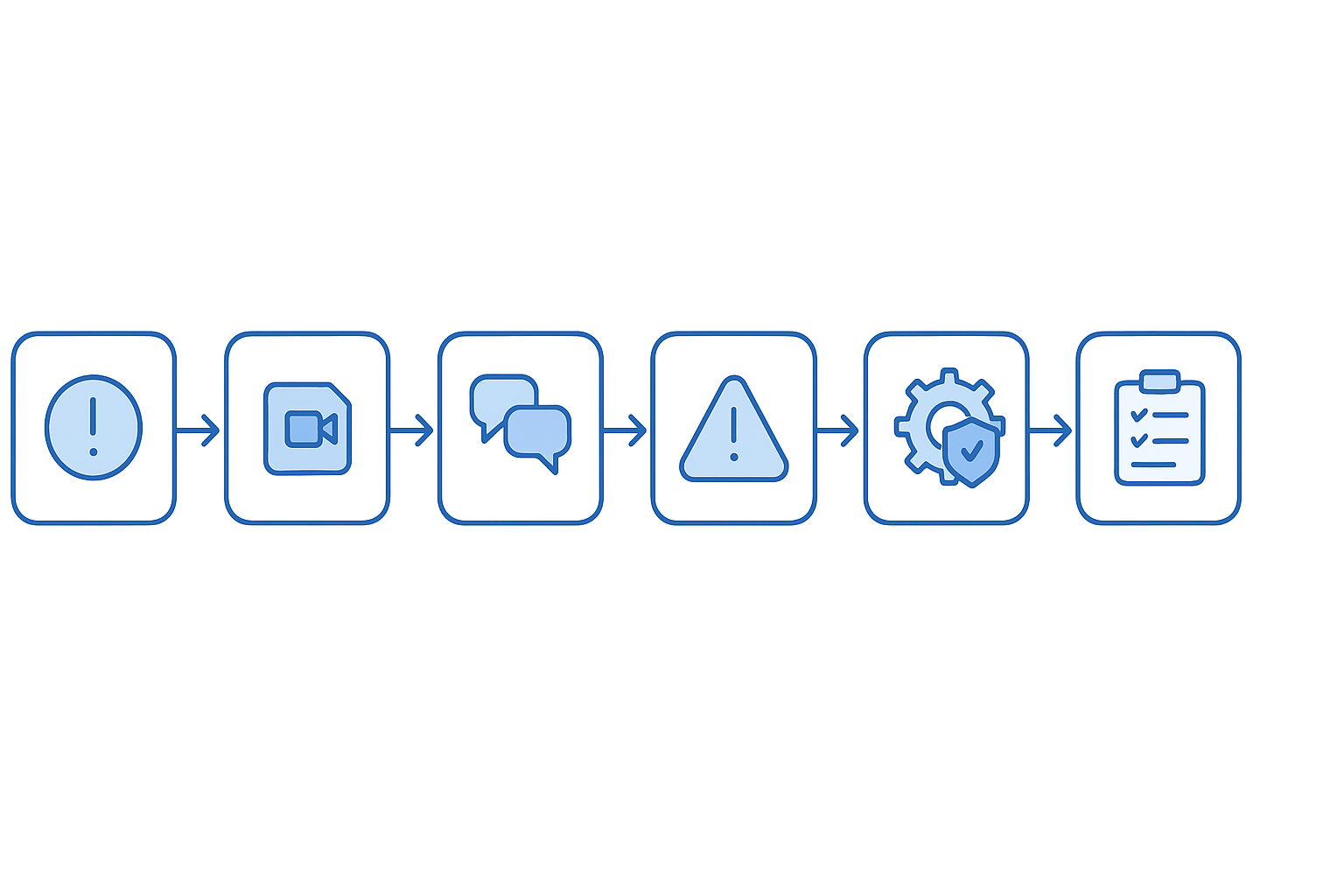

DPIA-first implementation workflow for egocentric data

A DPIA is "one of the ways that a data controller can check and demonstrate that their processing of personal data is compliant with the General Data Protection Regulation (GDPR) and the Data Protection Act (DPA) 2018," according to GOV.UK surveillance guidance.

The ICO outlines a seven-step process:

- Identify the need for a DPIA – Determine whether the processing is likely high risk.

- Describe the processing – Document nature, scope, context, and purposes.

- Consult individuals – Seek views of data subjects or their representatives.

- Assess necessity and proportionality – Confirm no less intrusive alternative exists.

- Identify and assess risks – Consider physical, emotional, and material harms.

- Identify mitigating measures – Options include anonymisation, reduced retention, and access controls.

- Integrate outcomes into the project plan – "You must integrate the outcomes of your DPIA into your project plans," the ICO states.

If you have a Data Protection Officer, the ICO states that "you must ask for their advice on your DPIA, and document it as part of the process." Consult the ICO when a high risk remains after mitigation. For law enforcement processing, the Information Commissioner will respond within six weeks, extendable by a further month for complex cases, as noted in law enforcement DPIA guidance.
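
The seven steps and the consultation rule can be sketched as a small tracker that reports what to do next. The step labels are shortened from the list above, and the function name is hypothetical; this is a planning aid under those assumptions, not an ICO tool.

```python
# Step labels abbreviated from the seven-step process described above.
DPIA_STEPS = [
    "identify need",
    "describe processing",
    "consult individuals",
    "assess necessity and proportionality",
    "identify and assess risks",
    "identify mitigating measures",
    "integrate outcomes",
]


def next_action(completed: set[str], residual_high_risk: bool) -> str:
    """Return the first unfinished step, or the outcome once all are done."""
    for step in DPIA_STEPS:
        if step not in completed:
            return step
    # All steps finished: a high risk that remains after mitigation
    # means the ICO should be consulted before processing starts.
    return "consult the ICO" if residual_high_risk else "proceed"


done = {"identify need", "describe processing"}
print(next_action(done, residual_high_risk=False))
print(next_action(set(DPIA_STEPS), residual_high_risk=True))
```

Because the function walks the steps in order, skipping ahead (for example, listing mitigations before describing the processing) still surfaces the earlier gap first.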

Key takeaways & where Luel can help

Off-the-shelf egocentric video datasets offer speed and scale, but they carry GDPR obligations that cannot be delegated away. Every buyer should:

- Classify the footage as special-category biometric data when faces are identifiable

- Complete a DPIA before procurement

- Verify that the vendor provides explicit consent documentation, de-identification logs, and a clear controller/processor agreement

- Use SCCs for cross-border transfers to countries without an adequacy decision, and complete a transfer impact assessment

- Build subject-rights workflows into the data pipeline

Luel's AI training data marketplace was designed with these requirements in mind. Every dataset is rights-cleared, quality-audited, and delivered with "consent releases, PII audits, and audit logging baked in," as noted on the Luel datasets page. JSON manifests include clip metadata, transcripts, and QA scores, giving compliance teams the provenance they need to demonstrate accountability.

If your next project involves egocentric video, start with the checklist above, then explore how a transparency-first data partner can remove the guesswork from GDPR compliance.

Frequently Asked Questions

What is the GDPR compliance checklist for egocentric video datasets?

The GDPR compliance checklist for egocentric video datasets includes steps like conducting a Data Protection Impact Assessment (DPIA), obtaining explicit consent, de-identifying footage, and ensuring secure cross-border data transfers. It helps AI teams mitigate legal risks associated with processing biometric data.

Why are egocentric videos considered special-category biometric data under GDPR?

Egocentric videos are considered special-category biometric data under GDPR because they can capture biometric identifiers like faces, which are used to uniquely identify individuals. This classification requires explicit consent and adherence to strict processing conditions under GDPR Article 9.

What technical safeguards can minimize privacy risks in POV datasets?

Technical safeguards for POV datasets include anonymization techniques like blurring faces and license plates, using adversarial training to remove privacy-sensitive information, and employing diffusion-based inpainting to synthesize new facial identities while preserving key attributes.

How does Luel ensure GDPR compliance for its datasets?

Luel ensures GDPR compliance by providing rights-cleared datasets with consent releases, PII audits, and audit logging. Their datasets come with JSON manifests that include metadata, transcripts, and QA scores, offering transparency and accountability for compliance teams.

What should be included in a Data Protection Impact Assessment (DPIA)?

A DPIA should describe the nature, scope, context, and purposes of data processing, assess necessity and proportionality, identify risks to individuals, and propose measures to mitigate those risks. It is crucial for ensuring GDPR compliance when processing high-risk data like egocentric videos.

Sources

- https://ico.org.uk/for-organisations/uk-gdpr-guidance-and-resources/lawful-basis/biometric-data-guidance-biometric-recognition/

- https://ico.org.uk/for-organisations/uk-gdpr-guidance-and-resources/lawful-basis/biometric-data-guidance-biometric-recognition/how-do-we-process-biometric-data-lawfully

- https://www.edpb.europa.eu/sites/default/files/files/file1/edpb_guidelines_201903_video_devices.pdf

- https://dataprotection.ie/en/dpc-guidance/guidance-body-worn-cameras-or-action-cameras

- https://www.rpclegal.com/snapshots/data-protection/new-edpb-guidelines-on-processing-personal-data-through-video-devices/

- https://ico.org.uk/for-organisations/uk-gdpr-guidance-and-resources/accountability-and-governance/guide-to-accountability-and-governance/data-protection-impact-assessments/

- https://ico.org.uk/for-organisations/uk-gdpr-guidance-and-resources/the-research-provisions/exemptions/

- https://ico.org.uk/for-organisations/uk-gdpr-guidance-and-resources/artificial-intelligence/guidance-on-ai-and-data-protection/how-do-we-ensure-lawfulness-in-ai/?search=DPIA

- https://www.gov.uk/government/publications/data-protection-impact-assessments-for-surveillance-cameras

- https://ego4d-data.org/pdfs/Ego4D-Privacy-and-ethics-consortium-statement.pdf

- https://ui.adsabs.harvard.edu/abs/2018arXiv180311556R/abstract

- https://openaccess.thecvf.com/content/ICCV2025W/CV4BIOM/papers/Egin_Now_You_See_Me_Now_You_Dont_A_Unified_Framework_ICCVW_2025_paper.pdf

- https://ico.org.uk/for-organisations/advice-and-services/audits/data-protection-audit-framework/toolkits/artificial-intelligence/contracts-and-third-parties

- https://www.appen.com/data-security

- https://commission.europa.eu/law/law-topic/data-protection/international-dimension-data-protection/new-standard-contractual-clauses-questions-and-answers-overview_en

- https://www.aiprivacylicense.com/

- https://www.waymo.com/open/terms

- https://ico.org.uk/for-organisations/uk-gdpr-guidance-and-resources/accountability-and-governance/data-protection-impact-assessments-dpias/how-do-we-do-a-dpia

- https://luel.ai