Rights-cleared off-the-shelf egocentric video datasets for enterprise AI

Explore the importance of rights-cleared egocentric video datasets for enterprise AI, highlighting compliance, legal risks, and best practices.

Rights-cleared egocentric video datasets are first-person video collections with explicit licensing for AI training and commercial use. For enterprise AI teams, these datasets have become essential now that compliance fines and copyright lawsuits have exposed the risks of training on unvetted footage. Appen offers an extensive catalog, but its public documentation does not detail the consent trails and usage rights that regulators increasingly demand for personal video collections.

Key Facts

• Legal compliance is mandatory: GDPR requires data minimization and informed consent for video processing, with enterprises facing mounting lawsuits from creators whose works appear in generative AI outputs

• Ego4D sets the standard: With 3,670 hours from 923 participants across 74 locations, it's 20x larger than prior collections and includes full consent documentation

• Hidden costs multiply quickly: Unclear provenance triggers expenses beyond licensing fees, including legal defense, re-labeling, and model retraining when problematic clips must be removed

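• Emerging datasets expand options: Ego-Exo4D (1,422 hours by the project's combined count), EgoDex (829 hours), and OpenEgo (1,107 hours) all publish their license terms, though some restrict use: EgoDex is released under CC-by-NC-ND, which bars commercial use and derivative works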

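• Current AI struggles with video understanding: When the EgoSchema benchmark was introduced, state-of-the-art models scored below 33% accuracy, where random guessing yields 20%; the top reported score has since reached 74.5%, still short of the ~76% human baseline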

Rights-cleared egocentric video datasets are first-person video collections whose owners have explicitly licensed the footage for AI training and commercial use. For enterprise AI teams, these datasets have become non-negotiable after a wave of compliance fines and copyright lawsuits exposed the dangers of training on unvetted internet scraps. Yet not every vendor documents provenance with equal rigor. Appen, for example, offers an extensive catalog but leaves enterprises guessing about the consent trails and usage rights behind individual clips. This gap matters because regulators and customers increasingly demand proof that personal video was collected lawfully.

This article unpacks why traceable egocentric footage is now table-stakes, examines the legal risks of unclear provenance, compares Appen's documentation limits with best-practice suppliers, and highlights emerging datasets that set a higher bar for enterprise compliance.

From internet scraps to rights-cleared footage: the new data mandate

A rights-cleared egocentric video dataset is a collection of first-person recordings whose owners have explicitly granted permission for AI training and commercial distribution. Licenses such as Ego4D's grant a "non-exclusive, non-transferable license to use the Database solely for the Purpose," covering activities from academic publication to training machine learning models, while the licensor retains database ownership. Detailed consent logs, de-identification steps, and usage clauses reduce downstream legal risk for enterprises building computer-vision systems.

The business push for traceable video is accelerating. "Data is critical to AI, but more time and resources are often invested in model development than data quality," notes Google's People + AI Guidebook. High-quality data must accurately represent a real-world phenomenon and be collected responsibly, reproducible, and reusable across relevant applications. The world's largest egocentric dataset, Ego4D, exemplifies this standard with more than 3,600 hours of densely narrated video captured by more than 900 unique camera wearers from 74 worldwide locations in 9 countries. The approach to collection was designed to uphold rigorous privacy and ethics standards with consenting participants and robust de-identification procedures.

For enterprise AI teams, the mandate is clear: data provenance is no longer optional. Publicly trained models have run afoul of laws regarding copyright and fair use, with lawsuits mounting from those who see their own works reflected in generative outputs. Investing in rights-cleared footage upfront is cheaper than defending a lawsuit later.

What are the legal and reputational risks of unclear provenance?

The risks extend far beyond bad press. Regulators demand that personal data be "adequate, relevant and limited to what is necessary in relation to the purposes for which they are processed," a principle GDPR calls data minimisation. Video surveillance systems collect massive amounts of personal data which may reveal highly personal information and even special categories of data, such as biometric identifiers.

NIST's AI Risk Management Framework for Generative AI lists four primary considerations: Governance, Content Provenance, Pre-deployment Testing, and Incident Disclosure. The framework warns that "challenges with risk estimation are aggravated by a lack of visibility into GAI training data" and the immature state of AI measurement. Without a paper trail, enterprises face risks ranging from confabulation and data privacy breaches to intellectual property disputes.

Public trust hinges on lawful, fair, and transparent surveillance practices. The UK Information Commissioner's Office emphasizes that "the public must have confidence that the use of surveillance systems is lawful, fair, transparent and meets the other standards set in data protection law." AI-based surveillance systems can process sensitive categories of personal data, making compliance even more critical.

Relevant standards (GDPR, NIST AI-RMF) at a glance

| Framework | Key Clause | Enterprise Implication |
|---|---|---|
| GDPR | Data minimisation, informed consent, right to erasure | Video must be captured in controlled environments with consent or PII must be blurred in public settings |
| NIST AI-RMF 1.0 | Voluntary, rights-preserving, non-sector-specific, use-case agnostic | AI systems must be valid, reliable, safe, secure, accountable, transparent, and fair |
| EDPB Guidelines 3/2019 | Processing personal data through video devices | Applies to any functioning camera processing personal data; requires separate justification for disclosure to law enforcement |

These frameworks converge on a single point: enterprises must document where their video came from, who consented, and how it will be used.
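Those three questions (where the video came from, who consented, and how it will be used) map naturally onto a per-clip record. The sketch below is a hypothetical illustration, not a schema defined by GDPR, NIST, or any vendor: the class name, field names, and use labels such as `model_training` are all assumptions. It treats a clip as acceptable when it has either a recorded consent date or de-identification, mirroring the consent-or-blur rule in the GDPR row above.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional, Set


@dataclass
class ProvenanceRecord:
    """Hypothetical per-clip record; every field name here is illustrative."""
    clip_id: str
    source: str                      # where the video came from
    consent_date: Optional[date]     # when the participant consented, if known
    deidentified: bool               # True if faces and other PII were blurred
    permitted_uses: Set[str] = field(default_factory=set)


def provenance_gaps(record: ProvenanceRecord, intended_use: str) -> List[str]:
    """Return human-readable gaps; an empty list means none were found."""
    gaps = []
    if not record.source:
        gaps.append("missing source")
    # Assumption: a clip needs either recorded consent or de-identification.
    if record.consent_date is None and not record.deidentified:
        gaps.append("no consent and no de-identification")
    if intended_use not in record.permitted_uses:
        gaps.append(f"use not covered: {intended_use}")
    return gaps


clip = ProvenanceRecord(
    clip_id="clip-001",
    source="vetted contributor",
    consent_date=date(2024, 1, 15),
    deidentified=False,
    permitted_uses={"model_training"},
)
print(provenance_gaps(clip, "model_training"))
print(provenance_gaps(clip, "redistribution"))
```

The first call reports no gaps, while the second flags that redistribution is not among the clip's permitted uses. A real audit would need legal review of the actual consent and license text; a record like this only makes the missing pieces visible.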

How does Appen's provenance gap compare to rights-cleared marketplaces?

Appen advertises an "extensive catalog of over 270 audio, image, video and text datasets in over 80 languages." The company emphasizes AI data quality as a key differentiator, stating that it establishes a high foundational level of quality with proprietary assets and unique analytics capabilities. Techniques such as Inter-Rater Agreements and tools like Model Mate help ensure label consistency.

However, Appen's public documentation does not detail the provenance of individual datasets. Enterprises cannot easily trace consent logs, de-identification procedures, or source licensing for specific clips. This opacity creates risk: "less than 33% of datasets are restrictively licensed, over 80% of the source content in widely used text, speech, and video datasets carry non-commercial restrictions," according to a comprehensive audit of nearly 4,000 public datasets.

By contrast, rights-cleared marketplaces such as Luel deliver every collection with consent releases, PII audits, and audit logging baked in. Luel sources from vetted contributors, maintains consent logs, and cross-checks every file for duplicates, safety issues, and instruction compliance. The difference is not just documentation depth; it is the ability to defend a dataset in court.

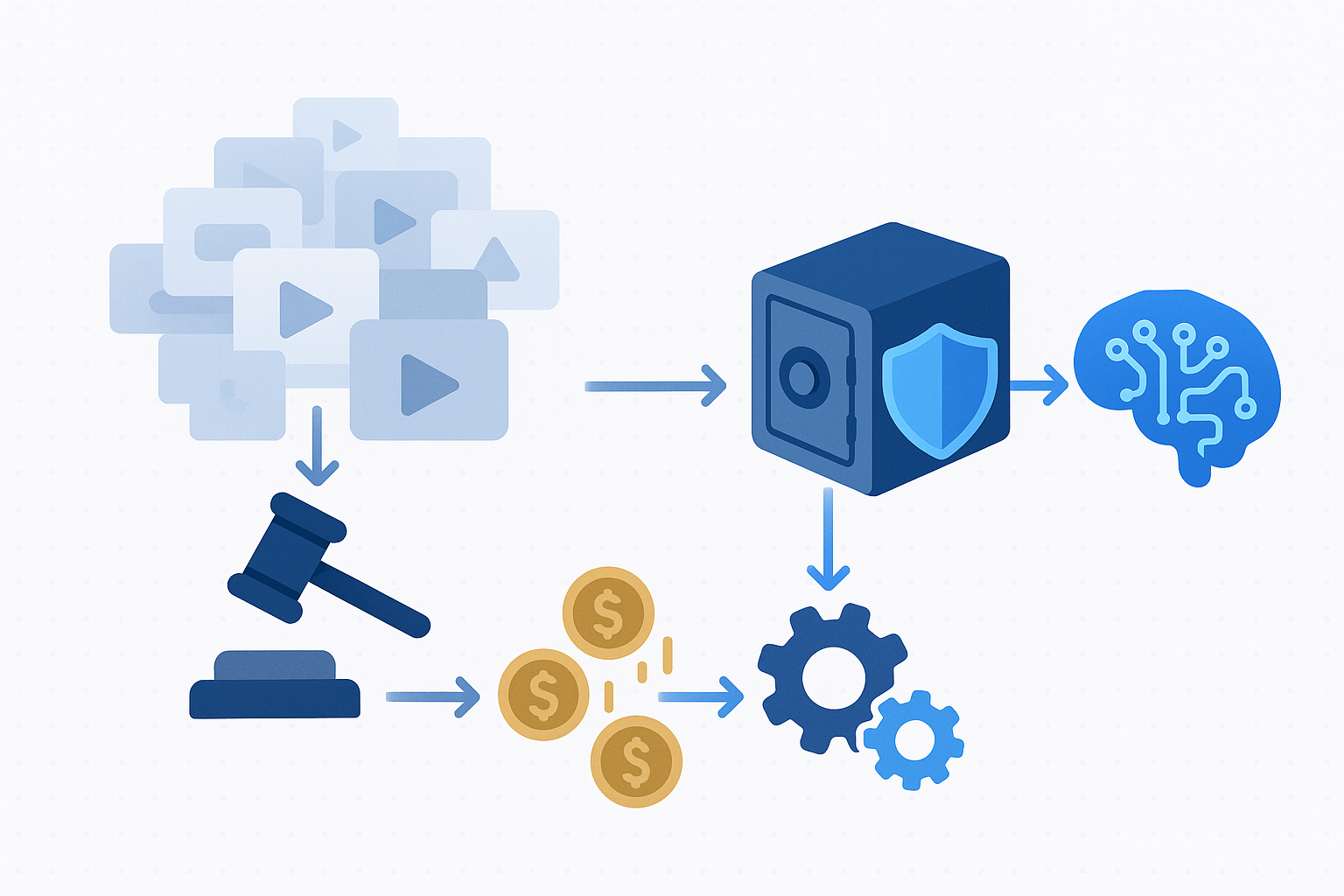

Hidden remediation costs of unclear rights

Choosing a dataset with murky provenance can trigger expenses that dwarf the original licensing fee:

Legal fees: Copyright and fair use lawsuits are mounting, with publicly trained models facing challenges from content creators who see their works reflected in generative outputs.

Re-labeling: If problematic clips must be removed, annotation work is wasted. "As free text becomes less of a competitive differentiator in the coming years, owning proprietary content sources will become all the more valuable."

Model re-training: Progress in AI is driven largely by the scale and quality of training data, so when problematic clips must be removed, models trained on them may have to be retrained. A lack of thorough data analysis has already led to privacy issues, retraction of datasets with harmful content, benchmark contamination, and copyright disputes.

Key takeaway: The upfront cost of a rights-cleared dataset is almost always lower than the combined expense of litigation, re-labeling, and re-training.

Why is Ego4D considered the gold standard for documented provenance?

Ego4D sets the benchmark for enterprise-grade egocentric video because it combines scale with transparency. The dataset consists of 3,670 hours of video collected by 923 unique participants from 74 worldwide locations in 9 countries, making it more than 20x larger than any prior egocentric collection.

From the outset, privacy and ethics standards were critical to this data collection effort. "612 hours of the EGO4D dataset contains video where participants consented to remain unblurred," the project notes. Elsewhere, video was captured in controlled environments with informed consent or in public settings where faces and other PII are blurred.

The Ego4D license grants a non-exclusive, non-transferable right to use the database for purposes including distributing images in publications, training machine learning models to detect or classify objects and activities, and creating annotations. End users retain intellectual property rights in all software, algorithms, and models developed from the database, while the licensor retains ownership of the underlying data.

What does the Ego4D license allow?

The "Purpose" clause in the Ego4D license explicitly permits:

- Distributing or reproducing images and videos in academic or commercial publications

- Using the database to develop, train, evaluate, or improve software, algorithms, or ML models designed to:

  - Detect, classify, recognize, retrieve, or understand objects, events, places, or activities

  - Model or reconstruct 3D objects or environments

  - Improve virtual reality or augmented reality applications

- Creating and distributing annotations to any images or videos in the database

Any publication based on the database must include a standardized citation referencing the Ego4D Consortium. This transparency allows enterprises to audit their training data and demonstrate compliance to regulators.

Which new rights-cleared egocentric datasets should enterprises watch?

Beyond Ego4D, several emerging datasets offer documented provenance and diverse modalities:

Ego-Exo4D V2: The V2 release lists 1,286.30 video hours (221.26 ego-hours) across 5,035 takes. The project describes more than 800 participants from 13 cities worldwide performing activities in 131 natural scene contexts and cites 1,422 hours of video combined, a figure that differs from the V2 release total. The dataset includes 7-channel audio, IMU, eye gaze, SLAM camera footage, and 3D environment point clouds.

EgoDex: The largest dexterous manipulation dataset to date, with 829 hours of egocentric video paired with 3D hand and finger tracking data. It consists of 338,000 task demonstrations across 194 tabletop manipulation tasks. EgoDex is publicly available under a CC-by-NC-ND license.

OpenEgo: A multimodal egocentric manipulation dataset totaling 1,107 hours across six public datasets, covering 290 manipulation tasks in 600+ environments. It features standardized hand-pose annotations and intention-aligned action primitives.

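Each dataset publishes its license terms, but the terms differ: EgoDex's CC-by-NC-ND license, for instance, bars commercial use and derivative works. Enterprises should confirm that a dataset's license covers the intended use before relying on it in a project that must withstand regulatory scrutiny.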

Long-form reasoning benchmarks (EgoSchema, EgoTempo)

Enterprises building video understanding models should track these evaluation suites:

| Benchmark | Source | Scale or Focus | Key Metric |
|---|---|---|---|
| EgoSchema | Derived from Ego4D | Over 5,000 human-curated Q&A pairs spanning 250+ hours | Accuracy (top score 74.5% vs. ~76% human baseline) |
| EgoTempo | Egocentric video | Emphasizes tasks requiring integration across the entire video | Temporal reasoning accuracy |

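When EgoSchema was introduced, state-of-the-art models achieved less than 33% accuracy, where random guessing yields 20% and humans reach roughly 76%. The top score in the table above reflects later models that have narrowed that gap. The benchmark's early results underscore the difficulty of long-form video understanding and the value of high-quality, rights-cleared training data.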
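Because EgoSchema is scored as multiple-choice accuracy, a raw score is easiest to read against the chance level. The sketch below is a minimal illustration, assuming five answer options per question (which is what a 20% random baseline implies); the function names and the toy predictions are hypothetical and not part of any official evaluation kit.

```python
from typing import List


def accuracy(predictions: List[int], answers: List[int]) -> float:
    """Fraction of questions where the predicted option matches the answer key."""
    if len(predictions) != len(answers) or not answers:
        raise ValueError("predictions and answers must be non-empty and equal length")
    correct = sum(p == a for p, a in zip(predictions, answers))
    return correct / len(answers)


def chance_corrected(acc: float, num_options: int = 5) -> float:
    """Rescale accuracy so random guessing maps to 0.0 and a perfect score to 1.0."""
    chance = 1 / num_options
    return (acc - chance) / (1 - chance)


# Toy example: four questions, three answered correctly.
print(accuracy([0, 1, 2, 3], [0, 1, 2, 4]))

# The figures quoted in this section, rescaled against a 20% chance level.
for label, acc in [("random", 0.20), ("early models", 0.33), ("human", 0.76)]:
    print(label, round(chance_corrected(acc), 4))
```

Rescaled this way, a 33% score sits much closer to guessing than to the human baseline, which is the point the raw numbers make less obviously.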

EgoTempo emphasizes tasks that require integrating information across the entire video, ensuring that models rely on temporal patterns rather than static cues. Extensive experiments show that current MLLMs still fall short in temporal reasoning on egocentric videos.

How Luel accelerates compliant data sourcing

Luel operates a two-sided AI training data marketplace that connects AI teams with a global network of vetted contributors to provide fast, rights-cleared multimodal data at scale. The platform offers curated datasets and custom data collection services focusing on video, audio, and voice recordings.

Every collection is rights-cleared, quality audited, and delivered with enterprise support. Luel delivers JSON manifests with clip metadata, transcripts, QA scores, and direct S3 download links. Consent releases, PII audits, and audit logging are baked in for every dataset.
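A manifest like this can be checked automatically before any clip reaches a training pipeline. The sketch below is hypothetical: Luel's actual manifest schema is not documented here, so the top-level `clips` key, the field names (`clip_id`, `s3_url`, `transcript`, `qa_score`, `consent_release_id`), and the 0.8 quality threshold are all assumptions chosen for illustration.

```python
import json
from typing import Dict, List

# Assumed field names; a real manifest may use different keys.
REQUIRED_FIELDS = ("clip_id", "s3_url", "transcript", "qa_score", "consent_release_id")


def check_manifest(manifest_text: str, min_qa_score: float = 0.8) -> Dict[str, List[str]]:
    """Map each problematic clip id to the list of issues found for it."""
    problems: Dict[str, List[str]] = {}
    for index, clip in enumerate(json.loads(manifest_text)["clips"]):
        clip_id = str(clip.get("clip_id", f"<entry {index}>"))
        # A field counts as missing when it is absent, null, or an empty string.
        issues = [f"missing {name}" for name in REQUIRED_FIELDS
                  if clip.get(name) in (None, "")]
        score = clip.get("qa_score")
        if isinstance(score, (int, float)) and score < min_qa_score:
            issues.append(f"qa_score {score} below {min_qa_score}")
        if issues:
            problems[clip_id] = issues
    return problems


sample = json.dumps({"clips": [
    {"clip_id": "a1", "s3_url": "s3://example-bucket/a1.mp4",
     "transcript": "pouring water", "qa_score": 0.93,
     "consent_release_id": "rel-17"},
    {"clip_id": "b2", "s3_url": "s3://example-bucket/b2.mp4",
     "transcript": "", "qa_score": 0.41},
]})
print(check_manifest(sample))
```

In this sample, clip `a1` passes, while `b2` is flagged for an empty transcript, a missing consent release, and a low QA score. Rejecting clips at ingestion is cheaper than removing them after annotation or training.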

For teams requiring egocentric vision data, Luel offers categories such as Egocentric Vision for Accessibility AI, with options for custom augmentation including annotations and translations. The platform distinguishes itself by cutting out slow vendor processes while ensuring data compliance and diversity.

Key takeaways for data-hungry AI teams

Enterprise AI projects live or die by the quality and legality of their training data. Here is what matters:

Demand full provenance: Rights-cleared datasets with documented consent logs, de-identification procedures, and explicit licensing protect against legal and reputational risk.

Audit your vendors: Appen's catalog is broad, but its provenance documentation leaves gaps. Marketplaces like Luel provide the paper trail enterprises need.

Benchmark wisely: Datasets like Ego4D, Ego-Exo4D, EgoDex, and OpenEgo set the standard for scale and transparency. Evaluation suites such as EgoSchema and EgoTempo help measure real-world performance.

Factor in hidden costs: Legal fees, re-labeling, and re-training can dwarf the price of a properly documented dataset purchased upfront.

For teams building next-generation multimodal AI, Luel offers a direct path to rights-cleared, quality-audited egocentric video with the documentation required to satisfy regulators and customers alike.

Frequently Asked Questions

What are rights-cleared egocentric video datasets?

Rights-cleared egocentric video datasets are collections of first-person video recordings where the owners have explicitly licensed the footage for AI training and commercial use, ensuring compliance with legal standards and reducing the risk of copyright issues.

Why is data provenance important for enterprise AI?

Data provenance is crucial for enterprise AI as it ensures that the data used is legally obtained and compliant with regulations, reducing the risk of legal issues and enhancing the trustworthiness of AI models.

How does Appen's documentation compare to rights-cleared marketplaces?

Appen's public documentation does not detail the provenance of individual datasets, making it difficult for enterprises to verify consent and usage rights. In contrast, rights-cleared marketplaces like Luel provide comprehensive consent logs and compliance documentation.

What are the legal risks of using datasets with unclear provenance?

Using datasets with unclear provenance can lead to legal issues such as copyright infringement and data privacy violations, resulting in costly lawsuits and reputational damage for enterprises.

How does Luel ensure compliance in data sourcing?

Luel ensures compliance by providing rights-cleared, quality-audited datasets with detailed consent logs, PII audits, and audit logging, making it easier for enterprises to meet regulatory requirements.

Sources

- https://ego4d-data.org/docs/privacy

- https://deloitte.com/za/en/Industries/tmt/research/taking-control.html

- https://ego4d-data.org/pdfs/Ego4D-Licenses-Draft.pdf

- https://pair.withgoogle.com/guidebook-v2/chapter/data-collection/

- https://ego4d-data.org/docs/

- https://www.edpb.europa.eu/sites/default/files/files/file1/edpb_guidelines_201903_video_devices.pdf

- https://ai.universityofcalifornia.edu/_files/documents/riskmanagementgenerativeai.pdf

- https://ico.org.uk/for-organisations/uk-gdpr-guidance-and-resources/cctv-and-video-surveillance/guidance-on-video-surveillance-including-cctv

- https://tsapps.nist.gov/publication/get_pdf.cfm?pub_id=936225

- https://www.appen.com/resources

- https://www.appen.com/ai-data-quality

- https://arxiv.org/abs/2310.16787

- https://www.cbinsights.com/research/ai-training-data-market-map/

- https://docs.ego-exo4d-data.org/

- https://arxiv.org/html/2505.11709v1

- https://arxiv.org/abs/2509.05513

- https://llmdb.com/benchmarks/egoschema

- https://arxiv.org/abs/2503.13646

- https://luel.ai/

- https://www.luel.ai/enterprise